State of the Call 2026: AI Deepfakes Are Here, Threatening 1 in 4 Americans with Sophisticated Voice Scams

Are you confident you can tell a real voice from an AI deepfake? Honestly, the latest information suggests most of us can't. And the risks are bigger than ever, with a growing threat impacting a quarter of the population.

State of the Call 2026: The AI Deepfake Reality Check

Here's the deal: AI-powered deepfake voice scams aren't just a futuristic idea anymore; they're a real danger right now. The latest State of the Call 2026 report from Hiya reveals that these clever tricks are quickly making us lose trust in voice calls. This isn't just about losing money; it's a big financial and reputation risk for both businesses and individuals.

Because of this, we really need smart ways to spot these fakes and strong plans to protect ourselves.

Quick Overview: The Eroding Trust in Voice Communication

I've noticed that many of us just don't trust our daily phone calls anymore. One clear sign: a huge 86% of unknown calls go unanswered (Hiya State of the Call Report). Why? Because people, including me, feel like scammers are always one step ahead of our defenses. It's a frustrating cycle where we feel more and more at risk.

AI has definitely made things more difficult. We're seeing more deepfake calls, and it's getting harder to tell what's real and what's made by AI. Many people aren't sure how realistic these AI voices truly are. This isn't just a feeling; there are serious warnings about it.

For example, experts at Gartner warned that by 2028, one in four job candidates around the world will be fake (Gartner, 2025 Warning). While these aren't all money scams, this number clearly shows how widely AI can be used for deception in many different areas, even when you're trying to get a job.

Technical Deep Dive: The AI Arms Race in Voice Scams and Detection

When I looked into the technical side, it became clear that we're in an "AI vs. AI" battle. AI-driven scams are now 'more complex, harder to spot, and faster to create' (KPMG 2026 Business Fraud Survey). This growing complexity means we really need strong ways to secure our voices. We've talked about this before, like with Pindrop's Battle Against Deepfake AI.

This isn't just talk; 81% of companies that faced fraud said AI was involved (KPMG 2026 Business Fraud Survey). The way AI can create deepfake audio is truly amazing, and that's exactly why it's so hard to detect.

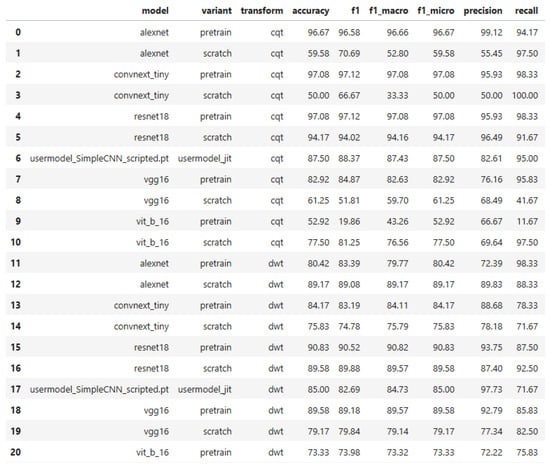

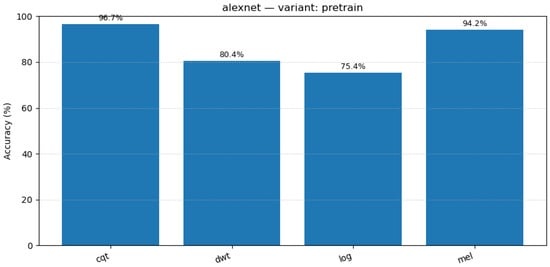

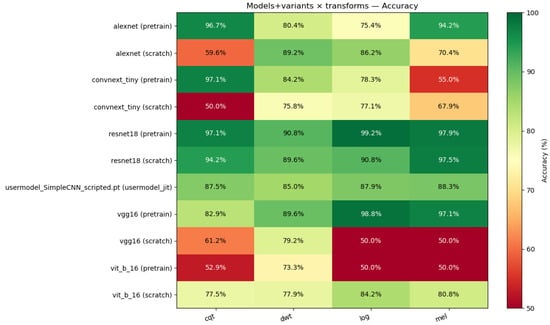

The good news is that the defense is also using AI. Frameworks like FakeVoiceFinder are appearing to fight back by systematically comparing different ways to spot fake voices. Researchers try out different AI models, look at different ways to represent sound (like spectrograms, which are pictures of a sound's frequency content over time), and combine these methods (FakeVoiceFinder Abstract). This careful approach helps us understand what works best to find fake audio.

The Anatomy of a Deepfake Voice Scam: A Technical Breakdown

To truly understand the threat, it's essential to delve into the technical underpinnings of how AI deepfake voices are created and why they are so difficult to detect.

The Mechanics of Voice Cloning

Deepfake voices are created by training machine learning models on large amounts of speech and then adapting them to mimic a specific speaker. The core technology is voice synthesis, frequently powered by Generative Adversarial Networks (GANs), though encoder-decoder and diffusion-based architectures are also common.

The process typically begins with the illicit collection of audio samples. These can be surprisingly brief, even a few seconds, and are sourced from voicemails, social media videos, podcasts, customer service calls, or even hacked applications. The model then analyzes the acoustic characteristics of the target's speech, such as pitch, tone, accent, pace, rhythm, cadence, inflection, and even subtle breathing patterns, to learn their unique "vocal fingerprint."

Once trained, the model can generate new speech in two ways. Text-to-speech synthesis converts text input into speech that sounds like the cloned voice. Voice conversion, the more advanced scenario, transforms a live speaker's voice into the target's voice in real time for interactive conversations.

In a GAN, a "generator" component creates the synthetic audio, while a "discriminator" component judges whether that audio sounds genuine by comparing it with real recordings of the voice. The two are trained against each other, iteratively refining the output until it becomes highly realistic.
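To make the generator-versus-discriminator tug-of-war a little more concrete, here's a minimal sketch of the standard GAN loss functions. It works on plain probabilities rather than real audio, the function names are my own, and the numbers are illustrative only. Real voice-cloning systems add many other loss terms on top of this.

```python
import math


def discriminator_loss(d_real: float, d_fake: float) -> float:
    """Binary cross-entropy loss for the discriminator.

    d_real: probability the discriminator assigns to a genuine sample being real.
    d_fake: probability the discriminator assigns to a generated sample being real.
    The loss is low when d_real is near 1 and d_fake is near 0.
    """
    return -(math.log(d_real) + math.log(1.0 - d_fake))


def generator_loss(d_fake: float) -> float:
    """Non-saturating generator loss: low when the fake is judged to be real."""
    return -math.log(d_fake)


# Early in training (illustrative numbers): the discriminator spots the fake easily.
early = (discriminator_loss(0.9, 0.1), generator_loss(0.1))

# Later: the discriminator can only guess, so it outputs 0.5 for everything.
late = (discriminator_loss(0.5, 0.5), generator_loss(0.5))

print(f"early: D loss = {early[0]:.3f}, G loss = {early[1]:.3f}")
print(f"late:  D loss = {late[0]:.3f}, G loss = {late[1]:.3f}")
```

As the generator improves, its loss falls while the discriminator's rises. That opposition is what pushes the synthetic audio toward realism.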

The Evolving Challenge of Deepfake Detection

Despite advancements in creation, detecting deepfake voices remains a significant technical challenge. Modern deepfake generators produce audio that so closely mimics the nuances of human speech, including natural intonation, pauses, and emotional tone, that distinguishing them from genuine recordings is increasingly tricky. Advanced AI models are capable of adapting to linguistic and contextual changes, further reducing any obvious signs of artificiality. This sophistication contributes to the alarmingly low human detection rate, with individuals typically identifying deepfake audio at approximately 48% accuracy. Furthermore, common audio compression techniques used in phone calls can inadvertently hide minor artifacts that might otherwise indicate synthetic generation, making the task for both human listeners and automated systems even harder.

AI Fraud Impact & Response Metrics

To give you a clearer picture of how big this problem is and how companies are fighting back, I've put together some key numbers:

| Metric | Figure | Source |
|---|---|---|
| Canadian businesses losing profits to AI fraud | 72% (losing 1-5% of annual profits) | KPMG 2026 Business Fraud Survey |
| Organizations that faced fraud and said AI was involved | 81% | KPMG 2026 Business Fraud Survey |
| Companies fighting AI with AI | 52% | KPMG 2026 Business Fraud Survey |
| Job candidates worldwide expected to be fake by 2028 | 25% (1 in 4) | Gartner, 2025 Warning |

Real-World Success: Businesses Fighting Back and Rebuilding Trust

It's not all bad news. My research shows that companies are actively dealing with this threat. A big 52% of companies are 'fighting AI with AI' (KPMG 2026 Business Fraud Survey). They're using technology to find strange patterns, confirm who users are, and spot fake content. This proactive defense matches the advice in NIST's Urgent Mandate: A Practical Guide to Defending Against Deepfake Voice Security Threats, which stresses the importance of a clear plan for AI defense. This active approach is really important.

Also, companies are planning to spend more on this: 6 out of 10 companies plan to increase their fraud prevention budgets by up to 7% this year (KPMG 2026 Business Fraud Survey). We're seeing real results too. For example, the British Columbia Lottery Corporation (BCLC) managed to get more people to answer calls, make campaigns more effective, and earn more money by using branded calls (Hiya State of the Call Report). This strategy helps you trust who's calling by showing a clear, verified caller ID.

Performance Snapshot: Benchmarking Deepfake Detection

Looking closer at the technical details, finding deepfake audio is a tough job, and the public senses it: 68% of Canadians think AI will eventually make scams impossible to detect (RBC 2026 Fraud Prevention Month Poll). This shows why we need strong detection methods that keep getting better.

Researchers are trying out different ways to "see" audio signals (like turning sound into visual charts) to get better at spotting fakes. We're talking about techniques like mel-spectrograms, log-spectrograms, scalograms, and Constant Q Transform (CQT) (FakeVoiceFinder Abstract). Each of these methods processes audio data differently, and understanding how they affect detection accuracy is key.
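To show what "turning sound into visual charts" means in practice, here's a minimal sketch of a log-spectrogram built with nothing but the Python standard library. It is not FakeVoiceFinder's code: the frame size, hop, and test tone are my own assumptions, and the mel conversion uses the common 2595 * log10(1 + f/700) formula. A real pipeline would use an FFT library rather than this slow, naive DFT.

```python
import cmath
import math


def hz_to_mel(freq_hz: float) -> float:
    """Convert a frequency in Hz to the mel scale (common 2595/700 formula)."""
    return 2595.0 * math.log10(1.0 + freq_hz / 700.0)


def log_spectrogram(signal, frame_size=256, hop=128):
    """Return one row per time frame; each row holds log-magnitudes per frequency bin."""
    # Hann window tapers the frame edges to reduce spectral leakage.
    window = [0.5 - 0.5 * math.cos(2 * math.pi * n / (frame_size - 1))
              for n in range(frame_size)]
    n_bins = frame_size // 2 + 1
    frames = []
    for start in range(0, len(signal) - frame_size + 1, hop):
        frame = [signal[start + n] * window[n] for n in range(frame_size)]
        row = []
        for k in range(n_bins):
            # Naive discrete Fourier transform for frequency bin k.
            acc = sum(frame[n] * cmath.exp(-2j * math.pi * k * n / frame_size)
                      for n in range(frame_size))
            row.append(math.log(abs(acc) + 1e-10))  # small offset avoids log(0)
        frames.append(row)
    return frames


# Assumed test input: a pure 1000 Hz tone sampled at 8000 Hz.
SAMPLE_RATE = 8000
signal = [math.sin(2 * math.pi * 1000 * t / SAMPLE_RATE) for t in range(1024)]

spec = log_spectrogram(signal)
peak_bin = max(range(len(spec[0])), key=lambda k: spec[0][k])
peak_hz = peak_bin * SAMPLE_RATE / 256

print(f"{len(spec)} frames x {len(spec[0])} bins")
print(f"strongest bin: {peak_bin} ({peak_hz:.0f} Hz, {hz_to_mel(peak_hz):.0f} mel)")
```

A mel-spectrogram goes one step further by grouping these frequency bins along the mel scale, which spaces frequencies closer to how we hear them. Scalograms and CQT use different time-frequency trade-offs again, which is why comparing them side by side matters.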

A single system for testing, like FakeVoiceFinder, is important for comparing these different technical approaches and making sure we keep making progress in this area.
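Here's a rough sketch of why a shared test harness helps: every detector is scored on the same labeled data, so the results are directly comparable. Everything in it is hypothetical. The two toy "detectors" are simple thresholds on made-up feature values, and none of this reflects FakeVoiceFinder's actual interface.

```python
from typing import Callable, Dict, List, Tuple

Sample = List[float]                 # stand-in for features extracted from audio
Detector = Callable[[Sample], bool]  # returns True when the sample is judged fake


def benchmark(detectors: Dict[str, Detector],
              dataset: List[Tuple[Sample, bool]]) -> List[Tuple[str, float]]:
    """Score every detector on the same dataset and rank them by accuracy."""
    results = []
    for name, detect in detectors.items():
        correct = sum(1 for sample, is_fake in dataset if detect(sample) == is_fake)
        results.append((name, correct / len(dataset)))
    return sorted(results, key=lambda item: item[1], reverse=True)


# Made-up feature vectors paired with ground-truth labels (True = fake).
dataset = [
    ([0.9, 0.2], True),
    ([0.8, 0.7], True),
    ([0.1, 0.6], False),
    ([0.3, 0.1], False),
]

# Two hypothetical detectors, each thresholding a different feature.
detectors = {
    "threshold_on_feature_0": lambda s: s[0] > 0.5,
    "threshold_on_feature_1": lambda s: s[1] > 0.5,
}

ranking = benchmark(detectors, dataset)
for name, accuracy in ranking:
    print(f"{name}: {accuracy:.0%}")
```

Swap the lambdas for real models and the toy list for a real corpus, and the same loop tells you which combination of model and audio representation actually performs best.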

Community Pulse: Widespread Anxiety and the Call-Blocking Reflex

Let's shift to how people are feeling. My look at what consumers are saying shows a lot of worry. 81% of Canadians feel 'there is a new scam to watch out for every single week' (RBC 2026 Fraud Prevention Month Poll). This constant flood of threats has made people think every call is a scam by default.

In fact, 83% say it is safest to assume any unexpected text, email, or call is a scam until proven legitimate (RBC 2026 Fraud Prevention Month Poll). These numbers show how often people run into, and sometimes fall for, these clever tricks. A worrying 40% of people admitted they spoke with someone on the phone before realizing it was a fraudster (RBC 2026 Fraud Prevention Month Poll). This highlights how convincing these calls can be, a problem AI deepfakes only make worse, and how much we need better public awareness and tools.

Beyond the Headlines: Expert Perspectives and Future Outlook

While the 'State of the Call 2026' report provides crucial data, broader expert analysis sheds further light on the escalating deepfake crisis and its implications.

According to AI CERTs News, organized deepfake operations have transitioned from a mere novelty to an urgent threat, with enterprises now identifying industrial-scale synthetic media as one of their highest risks. They highlight Pindrop telemetry showing a staggering 1,300% increase in synthetic-voice incidents during 2024 alone, driven by low-cost tools that enable rapid voice cloning from less than 30 seconds of audio. This has led to significant financial pain for businesses, with a 2024 Regula survey indicating average losses near $603,000 per financial firm, and 10% reporting damages exceeding $1 million. The forecast suggests the deepfake ecosystem, including its defenses, will reach $7.27 billion by 2031, underscoring the economic urgency of this threat.

Cyble's analysis further emphasizes this alarming trend, noting that 2025 marked a pivotal moment with deepfake-as-a-service (DaaS) emerging as a rapidly growing tool for cybercriminals. Their Executive Threat Monitoring report indicates that AI-powered deepfakes were implicated in over 30% of high-impact corporate impersonation attacks in 2025. The financial impact is substantial, with U.S. financial fraud losses climbing to $12.5 billion in 2025, significantly boosted by AI-assisted attacks. Looking ahead to 2026, Cyble predicts an increase in hyper-realistic voice and video deepfakes for social engineering, real-time financial fraud challenging banking authentication processes, and a looming content verification crisis where organizations will struggle to differentiate authentic from AI-generated content.

These expert perspectives collectively paint a picture of a rapidly evolving threat landscape where AI is not just enhancing existing fraud but creating entirely new vectors of attack. The sheer scale, accessibility of tools, and the increasing realism of deepfakes demand a multi-faceted and continuously adaptive defense strategy that goes beyond current measures, emphasizing the critical need for both technological innovation and heightened human vigilance.

Alternative Perspectives & Broader AI Fraud Landscape

It's important to remember that AI fraud isn't just about voice scams. My research shows a wider range of tricks, including AI-generated fake emails or chats (60% of schemes reported by companies), fake documents (39%), and voice-clone calls pretending to be executives (24%) (KPMG 2026 Business Fraud Survey). This threat, coming from many different angles, is costing businesses a lot of money. In fact, 72% of Canadian businesses lost between 1% and 5% of their annual profits to AI-powered fraud attacks in the last year (KPMG 2026 Business Fraud Survey).

Beyond the immediate financial hit, the damage to a company's reputation from a fraud attack can be huge. Experts are warning that 'A single scam can shatter customer confidence, result in lost business, and leave lasting damage to a company's brand' (KPMG 2026 Business Fraud Survey). This means we need a full, smart plan for preventing fraud that goes beyond just technology. It needs to include good management, skilled people, and clear responsibility.

Practical Tips & The Path Forward

So, what can we do about it? Both individuals and companies need clear advice. Here are some excellent tips that are crucial for everyone:

- Pause when you feel strong emotions, like fear or excitement.

- Verify any unexpected requests by contacting the person or company through a trusted, independent channel (not the one the caller gives you).

- Watch out for personalized scams that use information about you that's publicly available.

- Use strong passwords, multi-factor authentication (MFA) for extra security, and transaction alerts to know when your money is moving.
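For teams that want to turn the "verify through an independent channel" tip into a written rule, here's a minimal sketch of that logic. The directory, the field names, and the phone numbers are all hypothetical placeholders, and a real policy would need more nuance than this.

```python
from dataclasses import dataclass

# Hypothetical directory of contact details you already trust, for example
# the number printed on your bank card. The values are placeholders.
TRUSTED_DIRECTORY = {"Example Bank": "+1-555-0100"}


@dataclass
class IncomingRequest:
    claimed_sender: str
    callback_number: str  # the number the caller wants you to use
    asks_for_money_or_credentials: bool
    creates_urgency: bool


def next_step(request: IncomingRequest) -> str:
    """Decide how to respond; the caller-supplied number is never used."""
    trusted_number = TRUSTED_DIRECTORY.get(request.claimed_sender)
    if trusted_number is None:
        return "do not act: no independently known contact for this sender"
    if request.asks_for_money_or_credentials or request.creates_urgency:
        return f"hang up and call back on {trusted_number}"
    return f"low risk, but if in doubt verify on {trusted_number}"


suspicious = IncomingRequest(
    claimed_sender="Example Bank",
    callback_number="+1-555-0199",
    asks_for_money_or_credentials=True,
    creates_urgency=True,
)
print(next_step(suspicious))
```

The key design choice is that `callback_number` is deliberately ignored. However convincing the voice sounds, the only number that counts is one you already had.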

These tips echo the defense strategies talked about in McAfee's Project Mockingbird: CES 2024 AI Audio Deepfake Defense, which also focused on giving people tools and knowledge.

The truth is, there's a big gap in what people know: 54% of Canadians admit they don't always know what they should be doing to protect themselves (RBC 2026 Fraud Prevention Month Poll). This shows how important it is to have clear, continuous guidance and education. It's not just about buying technology; it's about teaching people to use it well and updating our defenses as fast as the threats change.

My Final Verdict: Navigating the Deepfake Storm

The rise of AI deepfake voice scams has definitely created a big trust problem in voice communication. My investigation confirms that we need many different solutions, not just one magic answer. Just relying on one way to detect fakes or one set of tips simply won't work. The only way to avoid widespread financial and reputation damage is to have a full plan. This means combining smart detection technologies with everyone – individuals and organizations – being extra careful. We need to keep learning, adapting, and investing in strong, connected fraud prevention systems to get through this evolving deepfake storm successfully.

Frequently Asked Questions

- How can I realistically distinguish an AI deepfake voice from a real one during a call?

  While it's getting harder, try to listen for small oddities in how they speak, like strange pauses or repeating phrases. Always double-check unexpected requests by contacting the person or company through a trusted, separate way. Don't use the contact info the caller gives you.

- Beyond voice scams, what other AI-enabled fraud should businesses and individuals be most concerned about?

  AI can also create very convincing fake emails/chats, fake documents, and calls where someone pretends to be a company executive. The main rule is to assume any unexpected message or call is a scam until you can independently confirm it's real.

- What immediate steps can organizations take to combat the rising tide of AI deepfake fraud?

  Companies should invest in AI tools that detect fakes, increase their budgets for preventing fraud, use branded calls so customers know who's calling, and constantly teach employees about new scam tactics and how to verify information.

Yousef S. | Latest AI

AI Automation Specialist & Tech Editor

Specializing in enterprise AI implementation and ROI analysis. Yousef holds a PhD in AI/Machine Learning and possesses over 10 years of experience in cybersecurity research, with a focus on deepfake detection. As a published researcher on deepfake detection algorithms, Yousef provides hands-on insights into what works in the real world.