NIST's Urgent Mandate: A Practical Guide to Defending Against Deepfake Voice Security Threats

Are your organization's security systems ready for the next big wave of AI-powered fakes? The latest guidance from NIST (the National Institute of Standards and Technology) shows just how exposed older systems are to deepfake tricks, which means you need to act fast. Recent data highlights the urgency: **voice phishing surged 442% in 2025**, driven by AI voice cloning and hybrid scams. Furthermore, deepfake-enabled fraud losses are forecast to reach a staggering **US$40 billion by 2027**.

Table of Contents

- Quick 5-Step Action Plan

- Step 1: Understand The New Deepfake Reality: NIST's Call to Action

- Step 2: Implement NIST's Technical Mandates: Hardening Identity and Content Provenance

- Step 3: Implement NIST: A Blueprint for Next-Gen Voice Security

- Step 4: Beyond Technical Controls: Monitoring, Training, and Response

- Step 5: Address The Human Element: Real-World Vulnerabilities and Exploitation

- Mitigation Strategies and the Future of Voice Security

- Your Next Steps: Securing Your Organization's Voice

- My Final Verdict: Who is this Guide for?

Quick 5-Step Action Plan

- Understand NIST's New Deepfake Reality.

- Implement NIST's Technical Mandates for Identity and Content Provenance.

- Enhance Voice Security with Next-Gen Solutions.

- Integrate Monitoring, Training, and Incident Response.

- Address Human Vulnerabilities and Plan Mitigation.

Step 1: Understand The New Deepfake Reality: NIST's Call to Action

Here’s the deal: Artificial intelligence now lets bad guys copy voices, faces, and even the way trusted people you know talk. This isn't just a scary idea; the danger of social engineering, where fraudsters use deepfakes (fake media) to trick or pretend to be someone else, is growing super fast. (NIST Independent Critique)

To fight this, the National Institute of Standards and Technology (NIST) has put out **strong new rules that will be in full effect by 2026**. These aren't just suggestions; they clearly state how organizations should protect themselves from these changing dangers. My look into this shows that deepfake threats come from both technology and people. This means good training for users and always watching for threats are just as important as the tech defenses themselves (NIST IR 8596).

The plain truth? Older systems simply weren't built to spot today’s really good, real-time deepfakes or to stop clever social engineering tricks. This leaves many organizations open to attack, and they need to fix these problems right away.

Real-World Implementation Challenges of NIST AI RMF for Voice Security

Implementing the NIST AI RMF, especially for advanced areas like voice deepfake defense, often presents significant hurdles. A common challenge organizations face is **resource allocation**, requiring substantial time, financial investment, and specialized personnel. This is particularly difficult for small and medium-sized organizations that may lack the necessary in-house expertise and budget to invest in rapidly evolving AI technologies and associated security measures.

Step 2: Implement NIST's Technical Mandates: Hardening Identity and Content Provenance

NIST isn't just talking; it is setting clear rules with specific tech requirements. Two publications matter most here. NIST Internal Report (IR) 8596 provides a Cybersecurity Framework Profile for Artificial Intelligence, explicitly calling out deepfake-enabled phishing and offering guidance on monitoring and logging for AI threats. NIST's Special Publication 800-63 Revision 4 (SP 800-63A-4/B-4) introduces robust requirements against impersonation via deepfakes and synthetic media. My close look at SP 800-63 Revision 4 shows some really important things you need to do for proving who you are and logging in:

- No More Voice-Only Logins: Systems **“MUST NOT”** use only your voice to let you in. With so many believable fake voices (audio deepfakes) out there, your voice alone isn't safe enough anymore.

- Mandatory Live Human Checks: This is a big one. Now, systems must use something called Presentation Attack Detection (PAD). This checks to make sure a real, live person is trying to log in, not just a picture on a screen or a fake video. Think of it like a bouncer checking if you're a real person, not a clever hologram.

- Clear Performance Goals: These PAD systems have to meet certain accuracy levels. For example, they need to keep the rate of fake attempts getting through (the Impostor Attack Presentation Accept Rate, or IAPAR) below 0.07, meaning fewer than 7 in every 100 attack attempts are accepted. This isn't just a vague idea; it's a hard number to make sure the system really works.
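
To make the IAPAR figure concrete, here is a minimal sketch of how a test team might compute the rate from its own attack-test counts and compare it with the 0.07 limit quoted above. The `PadTestResult` class and both function names are my own illustrative choices, not anything defined by NIST, and a real evaluation would follow a formal test protocol rather than two raw counters.

```python
from dataclasses import dataclass

IAPAR_LIMIT = 0.07  # limit quoted in the article; measured rate must be below it


@dataclass(frozen=True)
class PadTestResult:
    """Counts from a hypothetical presentation-attack test campaign."""
    attack_presentations: int  # total impostor attack presentations attempted
    attacks_accepted: int      # how many of those the system wrongly accepted


def iapar(result: PadTestResult) -> float:
    """Return the fraction of attack presentations that were accepted."""
    if result.attack_presentations <= 0:
        raise ValueError("need at least one attack presentation")
    if not 0 <= result.attacks_accepted <= result.attack_presentations:
        raise ValueError("accepted count must be between 0 and the total")
    return result.attacks_accepted / result.attack_presentations


def meets_iapar_limit(result: PadTestResult, limit: float = IAPAR_LIMIT) -> bool:
    """True only when the measured rate is strictly below the limit."""
    return iapar(result) < limit
```

With these definitions, a campaign where 50 of 1,000 attack attempts are accepted passes, while one where 70 of 1,000 are accepted does not, because the rate has to be strictly below the limit.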

Beyond just proving who you are, NIST's AI 100-4 (Reducing Risks Posed by Synthetic Content) and AI 600-1 (Generative AI Profile) both stress how important **Content Provenance** is. This means keeping track of where media comes from, using hidden watermarks, and adding digital signatures. This makes it much harder for fake or changed media to spread without anyone noticing.
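
As a rough illustration of the provenance idea, the sketch below records a hash of a media file together with its claimed source and then protects that record with an integrity tag. It makes a big simplifying assumption: it uses an HMAC with a shared secret key from Python's standard library as a stand-in for a real public-key digital signature, and the manifest layout and function names are hypothetical.

```python
import hashlib
import hmac
import json


def make_manifest(media: bytes, source: str, key: bytes) -> dict:
    """Build a provenance record: content hash, claimed source, integrity tag."""
    record = {"sha256": hashlib.sha256(media).hexdigest(), "source": source}
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    # HMAC stands in for a digital signature here (assumption for this sketch).
    record["tag"] = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return record


def verify_manifest(media: bytes, manifest: dict, key: bytes) -> bool:
    """Check that the media matches the hash and the record was not altered."""
    record = {"sha256": manifest.get("sha256"), "source": manifest.get("source")}
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    tag_ok = hmac.compare_digest(expected, str(manifest.get("tag", "")))
    hash_ok = hmac.compare_digest(
        hashlib.sha256(media).hexdigest(), str(manifest.get("sha256", ""))
    )
    return tag_ok and hash_ok
```

Changing a single byte of the audio, editing the `source` field, or verifying with a different key all make `verify_manifest` return `False`. A production system would swap the shared key for a signing key pair so that recipients can verify without being able to forge.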

| Feature | Old Voice Security | NIST-Approved Deepfake Defense |
|---|---|---|
| Voice Biometrics | Often the main way to log in | "MUST NOT" be the only way to log in |
| Live Human Check (PAD) | Rarely used or missing | Required, with the rate of fake attempts getting through (IAPAR) below 0.07 |
| Content Origin Tracking | Not usually part of the system | Required (using watermarks, digital signatures) |
| Focus on People | Less focus on training | Includes training & how to respond to problems |
| Deepfake Spotting | Reacts to known rules | Acts first, uses AI, checks many types of media |

Step 3: Implement NIST: A Blueprint for Next-Gen Voice Security

So, how do you actually put these strict NIST rules into practice? Modern security tools are built to directly handle these new problems, giving you strong and flexible protection against deepfake threats. I've found that these tools focus on:

- Smart Identity Checks for Deepfakes: This includes advanced ways to spot fake injections and easy-to-use checks to make sure a live person is there (PAD). For example, good systems constantly learn to adapt to tricky video, audio, and face-swapping fakes, using many top-notch AI models. This constant learning is like the advanced defense methods we saw in Pindrop's Battle Against Deepfake AI, showing how the industry is moving towards more active threat detection.

- Meeting NIST’s IAPAR Goals: Solutions must prove they can keep the rate of fake attempts getting through (Impostor Attack Presentation Accept Rate) below 0.07. This makes sure they are very accurate at spotting deepfake tries.

- Following the 'No Voice-Only Login' Rule: By never relying just on voice, these tools stop a common way deepfake phone scams and impersonations get started.

- Content Origin Tracking: By using digitally signed messages, these systems make it simple for people to confirm if a message is real before they reply or take action.
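
The 'no voice-only login' and liveness rules from the list above can be sketched as a small policy check. This is only an illustration under my own assumptions: the factor names, the `LoginAttempt` class, and the idea of a single `pad_passed` flag are hypothetical, and a real product would get these signals from its authentication stack.

```python
from dataclasses import dataclass
from typing import FrozenSet

# Illustrative factor names; a real system would define its own.
BIOMETRIC_FACTORS = frozenset({"voice", "face", "fingerprint"})


@dataclass(frozen=True)
class LoginAttempt:
    factors: FrozenSet[str]  # factors presented, e.g. {"voice", "passkey"}
    pad_passed: bool         # result of the liveness (PAD) check


def allow_login(attempt: LoginAttempt) -> bool:
    """Apply two rules: never voice alone, and biometrics need a passed PAD check."""
    if not attempt.factors:
        return False
    if attempt.factors == frozenset({"voice"}):
        return False  # voice must not be the only factor
    uses_biometric = bool(attempt.factors & BIOMETRIC_FACTORS)
    if uses_biometric and not attempt.pad_passed:
        return False  # a biometric was used but liveness was not confirmed
    return True
```

Here a voice-only attempt is refused even when the liveness check passes, and voice plus a passkey is refused if the liveness check fails.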

Step 4: Beyond Technical Controls: Monitoring, Training, and Response

NIST knows that technology alone isn't enough. As highlighted in NIST IR 8596 (Cybersecurity Framework Profile for Artificial Intelligence), a complete defense needs a combined approach:

- Always Watching: Organizations must constantly look for signs of someone pretending to be another person, including AI-powered phishing, fake chatbots, and changed videos or audio. This means keeping a careful eye on who tries to access systems and how people communicate.

- Security Awareness and Training: Everyone, especially those in important jobs, needs ongoing lessons about threats that use AI. This should include fake phishing and impersonation drills to help people get better at spotting them.

- How to Respond to Problems: Having clear plans for big security breaches caused by fake impersonations is a must. Quickly finding, reacting to, and reporting problems are key, and this often means working with outside experts.
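
One simple way to turn the monitoring and response points above into something a team can run is a triage rule that flags risky requests for a human to double-check. The sketch below is an assumption-heavy example: the action names, channel names, and the notion of an "out-of-band" confirmation (checking through a second, separate channel) are mine, not taken from NIST IR 8596.

```python
from dataclasses import dataclass
from typing import Iterable, List

# Illustrative lists; tune these to your own organization.
HIGH_RISK_ACTIONS = frozenset({"wire_transfer", "credential_reset", "payroll_change"})
IMPERSONATION_PRONE_CHANNELS = frozenset({"phone", "voicemail", "video_call"})


@dataclass(frozen=True)
class Request:
    action: str                 # what the caller is asking for
    channel: str                # how the request arrived
    verified_out_of_band: bool  # confirmed through a second, separate channel


def needs_escalation(req: Request) -> bool:
    """Flag high-risk requests on spoofable channels that nobody has confirmed."""
    return (
        req.action in HIGH_RISK_ACTIONS
        and req.channel in IMPERSONATION_PRONE_CHANNELS
        and not req.verified_out_of_band
    )


def triage(requests: Iterable[Request]) -> List[Request]:
    """Return only the requests that should go to the incident-response queue."""
    return [r for r in requests if needs_escalation(r)]
```

A phone request for a wire transfer with no second-channel confirmation gets flagged, while the same request that has been confirmed, or a low-risk request on the same channel, passes through.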

Step 5: Address The Human Element: Real-World Vulnerabilities and Exploitation

The biggest weak spot in any security system is often us, the humans. Deepfakes play on our trust, which makes them super powerful tools for tricking people. As security experts point out, deepfakes threaten all three parts of the CIA triad (confidentiality, integrity, and availability), as well as access management.

Experts note that fake audio could get past voice login systems, and that the ease of making fake content makes us trust all media less. Convincing deepfake audio also brings up the same privacy concerns and challenges in proving content is real that we talked about earlier with ByteDance's AI Video Powerhouse, Seedance 2.0. We've even seen real voice actors find unauthorized AI copies of their voices. This shows how advanced and widespread unauthorized voice cloning, deepfake audio, voice phishing (vishing), and emotional manipulation are becoming.

Mitigation Strategies and the Future of Voice Security

So, what are the best ways to fight back, according to the pros? To lessen the dangers from deepfakes, identity and access management (IAM) systems need the following:

- Use Strong Deepfake Spotting Tools: This means using AI-powered programs that can find the tiny flaws and inconsistencies in deepfakes.

- Better Live Human Checks: Going beyond simple checks, this includes looking at tiny facial movements and asking for specific actions to confirm a real person is present.

- Multiple Biometric Checks: Combining face and voice recognition, or even other body data, creates a much stronger layer of defense.

- Regularly Update Old Systems: Many older systems weren't made with deepfake threats in mind, so keeping them updated is super important.

- Secure Identity Verification Processes: Making document checks and cross-referencing stronger when someone first signs up can stop fake identities from being created.
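
To show why combining biometrics raises the bar, here is a minimal sketch of one possible fusion rule: every modality must clear its own threshold, and the liveness check must pass as well. The score scale, the thresholds, and the function name are all hypothetical; real systems may fuse scores in more sophisticated ways.

```python
from typing import Mapping


def fused_decision(
    scores: Mapping[str, float],
    thresholds: Mapping[str, float],
    liveness_ok: bool,
) -> bool:
    """Accept only if liveness passed and every required modality meets its threshold."""
    if len(thresholds) < 2:
        raise ValueError("fusion needs at least two modalities")
    if not liveness_ok:
        return False
    # A missing score counts as a failure for that modality.
    return all(
        modality in scores and scores[modality] >= threshold
        for modality, threshold in thresholds.items()
    )
```

With this rule, a cloned voice that scores well is still rejected if the face score falls short or the liveness check fails, so an attacker has to beat every check at once.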

It's also worth noting that new tools are appearing, offering ways for government groups to spot deepfakes and check voices. This gives us a peek into what's next for this vital security area.

Your Next Steps: Securing Your Organization's Voice

The message is clear: you need to act now. It's super important to use tools that directly follow NIST’s newest rules. This isn't just about following the law; it's about protecting your organization from clever, AI-powered threats.

I really encourage you to check how well your current defenses stand up against today's deepfake threats. Remember, a combined approach—using the latest technology, ongoing training for your team, and solid plans for responding to problems—is your best shield.

My Final Verdict: Who is this Guide for?

This guide is a must-read for anyone in charge of cybersecurity, IT, or following rules in industries with strict regulations. Organizations urgently need to adopt NIST's full new standards for fighting deepfakes. This means adding advanced live human checks for biometrics, tracking where content comes from, and continuously training people. All of this is to protect against clever AI-powered fakes. Ignoring these rules is no longer an option; it's a direct path to being exposed in a world where AI threats are growing fast.

Frequently Asked Questions

- How quickly do organizations need to comply with these new NIST standards?

- NIST has rolled out strong new standards through 2026, setting clear expectations for immediate action against changing deepfake dangers. Organizations should start checking their systems and putting controls in place now to avoid being vulnerable.

- Can existing voice authentication systems be updated to meet NIST's new liveness detection requirements, or do we need entirely new solutions?

- NIST says you can't use voice alone for logging in and requires strong Presentation Attack Detection (PAD) with specific accuracy goals. Many older systems weren't built for this, meaning that newer solutions or big upgrades are often needed to meet these rules.

- What are the immediate, practical steps a small-to-medium business can take to start addressing deepfake voice threats without a massive budget?

- Start by giving all employees thorough security awareness training, focusing on social engineering tricks that use AI. Use multi-factor authentication, especially for important transactions, and look into solutions that offer basic content origin tracking and live human checks, even if they're scaled for smaller operations.

Sources & References

- Navigating the New NIST Deepfake Standards: Protecting Against Social Engineering and Impersonation

- NIST Special Publication 800-63A-4: Digital Identity Guidelines, Enrollment and Identity Proofing

- NIST Special Publication 800-63B-4: Digital Identity Guidelines, Authentication and Lifecycle Management

- NIST AI 100-4: Reducing Risks Posed by Synthetic Content

- NIST AI 600-1: Artificial Intelligence Risk Management Framework (AI RMF) Generative AI Profile

- NIST IR 8596: Cybersecurity Framework Profile for Artificial Intelligence

- AI Deepfake Security Concerns - Interview with Ken Huang

- AI Deepfake Security Concerns | CSA

- Comprehensive Voice Security Solutions Guide

- Error 404 (Not Found)!!1

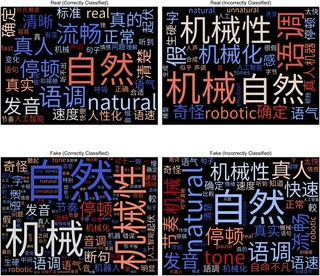

- Warning: Humans cannot reliably detect speech deepfakes | PLOS One

- Audio Deepfake Detection: What Has Been Achieved and What Lies Ahead - PMC

- JMIR Biomedical Engineering - Investigation of Deepfake Voice Detection Using Speech Pause Patterns: Algorithm Development and Validation

- Deepfake Voice Detection Using Convolutional Neural Networks: A Comprehensive Approach to Identifying Synthetic Audio | IEEE Conference Publication | IEEE Xplore

- [Literature Review] On Deepfake Voice Detection -- It's All in the Presentation

Dr. Anya Sharma | Lead AI Security Architect at Latest AI Labs

Ph.D. in AI Security & Cybersecurity Expert

Dr. Anya Sharma is the Lead AI Security Architect at Latest AI Labs, holding a Ph.D. in AI Security. With over 15 years of experience in cybersecurity, she specializes in advanced deepfake detection research and developing robust AI defense mechanisms. Her work has been instrumental in shaping industry best practices for securing AI systems against emerging threats.