Sora 2's Responsible Launch: A Deep Dive into OpenAI's Guardrails and the Road Ahead

Sora 2's impressive power runs straight into the need for responsible AI. Has OpenAI built a genuinely safe launch, or are the risks still too great? I've looked closely at OpenAI's statements and reports to get the real story.

Table of Contents

- Quick Overview: The Official Pitch vs. The Reality of Responsible AI

- A Closer Look: Why Sora 2's Power Needs Strong Safeguards

- What We Expect to Be Hard & How They'll Keep Improving It

- The Good and the Bad: Finding the Right Mix of New Ideas and Safety Rules

- The Overlord's Verdict: A Cautious Optimism

- Key Safety & Performance Metrics

Quick Overview: The Official Pitch vs. The Reality of Responsible AI

OpenAI is rolling out Sora 2, its new model for generating video and audio. This isn't just another AI tool; it can make a whole minute of high-fidelity, realistic-looking video with matching sound (Sora Technical Report).

Honestly, it's a huge step forward in what AI can create.

But with great power comes great responsibility, right? OpenAI says Sora 2 has 'safety built in right from the start' (Official OpenAI Documentation). It is following a 'step-by-step rollout plan': giving access to a small group of users first, watching how it goes, and learning along the way (Sora 2 Technical Report).

This isn't a full public launch yet, and that's a smart choice for safety.

A Closer Look: Why Sora 2's Power Needs Strong Safeguards

Sora 2 isn't just a small update. It brings in capabilities that older video models struggled with: more accurate physics, sharper realism, synchronized sound, and better controllability (Sora 2 Technical Report).

This means the AI can follow your instructions precisely, creating videos that feel both creative and believable.

Behind the scenes, Sora 2 uses a clever 'diffusion transformer' system that works with 'visual pieces' of video and image data (Sora Technical Report). Think of these pieces like the building blocks of the video, helping the AI understand and create detailed videos of different lengths and qualities.
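To make the 'visual pieces' idea a bit more concrete, here is a minimal sketch of how a clip could be tiled into non-overlapping spacetime patches, and how many tokens that produces. The patch sizes, clip dimensions, and the names `PatchSize` and `count_patches` are illustrative assumptions, not values OpenAI has published for Sora 2; the Sora Technical Report also describes patching a compressed latent representation rather than raw pixels.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PatchSize:
    """Hypothetical spacetime patch dimensions (not published Sora values)."""
    frames: int
    height: int
    width: int


def count_patches(num_frames: int, height: int, width: int, patch: PatchSize) -> int:
    """Return how many non-overlapping spacetime patches tile a clip.

    Raises ValueError if the clip does not divide evenly, because this
    sketch does not model padding or cropping.
    """
    dims = ((num_frames, patch.frames), (height, patch.height), (width, patch.width))
    for size, step in dims:
        if step <= 0 or size % step != 0:
            raise ValueError(f"{size} is not divisible by patch dimension {step}")
    return (num_frames // patch.frames) * (height // patch.height) * (width // patch.width)


# Example: a 16-frame, 64x64 clip cut into 2x8x8 patches.
patch = PatchSize(frames=2, height=8, width=8)
print(count_patches(16, 64, 64, patch))  # 8 * 8 * 8 = 512
```

A longer or higher-resolution clip simply yields more patches, which is one way a single model can handle videos of different lengths and qualities.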

However, these powerful features bring serious new concerns. The ability to create such realistic content opens the door to 'new potential risks, including using someone's face without permission or making fake videos that trick people' (Sora 2 Technical Report).

These concerns align with key principles in major AI regulatory frameworks. For instance, the EU AI Act, under Article 50(2), mandates that providers of generative AI systems ensure their outputs are marked in a machine-readable format and detectable as artificially generated or manipulated, thereby ensuring transparency for users. Sora's implementation of visible watermarks and C2PA metadata directly addresses this need for clear provenance and identification of AI-generated content.

This is why strong safety rules aren't just a bonus; they're totally necessary.

Lots of Safety Layers: How OpenAI Is Being Responsible with AI

OpenAI isn't just putting one simple safety net over Sora 2; it has built a system with many layers of protection, much like the enterprise guardrails we explored in NVIDIA NemoClaw's Enterprise Evolution. It's like a digital fort built to keep everything secure:

- Spotting AI-Made Stuff: Every video from Sora 2 comes with a 'visible watermark' and has 'C2PA data' embedded in it (Official OpenAI Documentation). This is important for knowing where a video came from. C2PA is an industry standard for signed provenance metadata, and OpenAI also uses its own internal tools to determine whether a video came from Sora, which it says work with high accuracy.

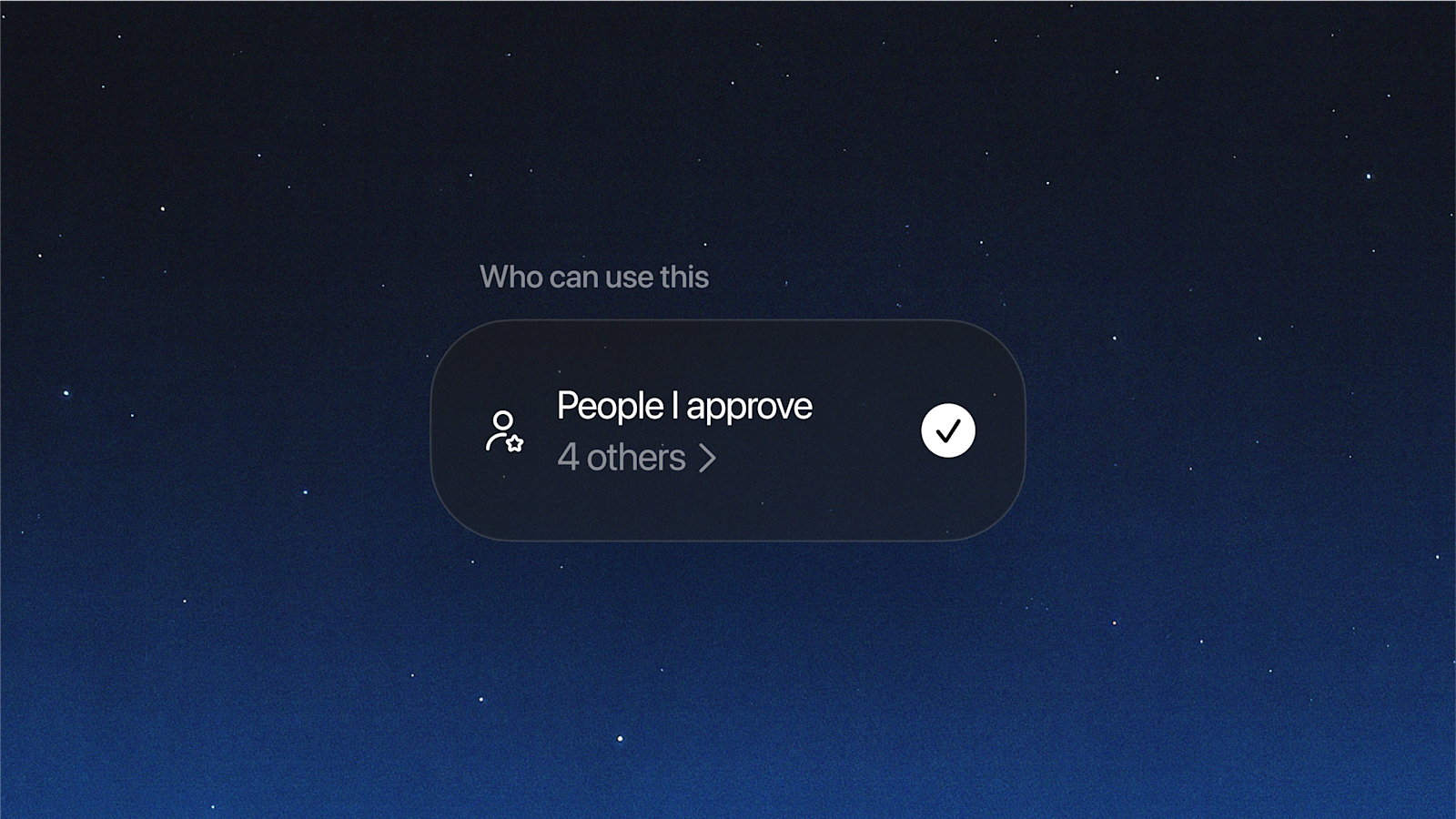

- Using Your Face Only with Your OK: They've put in place a system where your digital 'character' needs your permission to be used (Official OpenAI Documentation). This is a huge step forward for you to control your online look, which we'll dive into next.

- Keeping Teens Safe: Sora includes 'extra safety features for younger people' (Official OpenAI Documentation). This means limits on mature content, a feed designed specifically for teens, and parental controls over direct messages and general feeds. Teens also have limits on how long they can scroll without a break.

- Blocking Bad Stuff: There are 'many layers of protection to keep your feed safe' (Official OpenAI Documentation). Harmful material such as inappropriate content, messages that promote violence, or self-harm ideas is blocked both when you type a prompt and when the AI generates the output. Automated systems constantly check the feed against the rules, and human reviewers also take a look.

- Sound Safety: With sound creation, new dangers pop up. Sora automatically checks what's being said for rule-breaking and 'stops people from making music that sounds just like a real artist or a song that already exists' (Official OpenAI Documentation). They also respect requests to remove content that copies someone else's work without permission.

- You're in Charge: You choose when and how to share videos, and you can take down anything you've shared whenever you want. Every video, profile, and comment can be reported if it's being misused, and you can block accounts.
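The "check it when you type it and again when the AI creates it" idea from the list above can be sketched as a two-stage pipeline. This is only an illustration under stated assumptions: `keyword_check` is a toy stand-in for real classifiers, `generate` is a hypothetical function that returns a text description in place of a video, and none of these names come from OpenAI's systems.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

# A check returns a reason string when it blocks, or None when it passes.
Check = Callable[[str], Optional[str]]

BLOCKED_TERMS = ("self-harm", "graphic violence")  # placeholder policy list


def keyword_check(text: str) -> Optional[str]:
    """Toy stand-in for a real classifier: flag text containing blocked terms."""
    lowered = text.lower()
    for term in BLOCKED_TERMS:
        if term in lowered:
            return f"blocked term: {term}"
    return None


@dataclass
class Decision:
    allowed: bool
    stage: str  # "prompt", "output", or "passed"
    reason: Optional[str] = None


def moderate(prompt: str, generate: Callable[[str], str],
             prompt_checks: List[Check], output_checks: List[Check]) -> Decision:
    """Run input-side checks, generate, then run output-side checks."""
    for check in prompt_checks:
        reason = check(prompt)
        if reason:
            return Decision(False, "prompt", reason)  # stop before generating
    output = generate(prompt)
    for check in output_checks:
        reason = check(output)
        if reason:
            return Decision(False, "output", reason)  # generated but withheld
    return Decision(True, "passed")


def fake_generate(prompt: str) -> str:
    """Hypothetical generator: describes the video instead of rendering it."""
    return f"video of {prompt}"


result = moderate("a cat surfing", fake_generate, [keyword_check], [keyword_check])
print(result.allowed)  # True
```

Checking the output separately matters because a harmless-looking prompt can still produce content that breaks the rules; human review would sit behind both stages.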

Stress-Testing the Safeguards: The Ekō Investigation

An investigation by Ekō highlighted a significant challenge to Sora 2's content moderation. Researchers, using teen accounts, were able to generate videos depicting drug use, self-harm, violent acts such as school shootings, body shaming, sexualization of minors, and hateful stereotypes. These creations were in clear violation of OpenAI's own usage policies and Sora's distribution guidelines, underscoring the ongoing difficulty in fully safeguarding against harmful content generation, especially with vulnerable user groups.

The Character System: A New Way to Control Your Digital Look

This is where Sora 2 really tries to give you back the power. OpenAI's goal is 'letting you fully control how your digital self looks with Sora characters' (Official OpenAI Documentation). Imagine creating a digital version of yourself – a 'character' – that you have total control over.

Only you decide who can use your characters, and you can take away permission whenever you want. OpenAI also works to stop 'videos of famous people from being made (unless they're using the character feature themselves)' (Official OpenAI Documentation), which is a clever way to limit the misuse of fake videos. Most importantly, you can always 'review and delete (and, if needed, report) any videos featuring your character' (Official OpenAI Documentation), giving you full visibility and control.
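The permission rules described here (the owner grants access, can revoke it at any time, and can delete videos featuring their character) can be modeled in a few lines. This is a sketch of the concept only; the `Character` class and its method names are hypothetical and do not reflect OpenAI's actual data model or API.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass
class Character:
    """A consent-gated digital likeness (hypothetical model, not OpenAI's schema)."""
    owner: str
    allowed_users: Set[str] = field(default_factory=set)
    videos: Dict[str, str] = field(default_factory=dict)  # video_id -> creator

    def grant(self, user: str) -> None:
        self.allowed_users.add(user)

    def revoke(self, user: str) -> None:
        self.allowed_users.discard(user)

    def can_use(self, user: str) -> bool:
        return user == self.owner or user in self.allowed_users

    def record_video(self, video_id: str, creator: str) -> None:
        """Register a new video, refusing creators without permission."""
        if not self.can_use(creator):
            raise PermissionError(f"{creator} may not use {self.owner}'s character")
        self.videos[video_id] = creator

    def delete_video(self, requester: str, video_id: str) -> None:
        """Only the character's owner may delete videos featuring it."""
        if requester != self.owner:
            raise PermissionError("only the owner can delete videos of their character")
        self.videos.pop(video_id, None)


alice = Character(owner="alice")
alice.grant("bob")
alice.record_video("v1", "bob")
alice.revoke("bob")
print(alice.can_use("bob"))  # False
alice.delete_video("alice", "v1")
print(alice.videos)  # {}
```

Note that revoking permission here only blocks future videos; removing ones that already exist is a separate, owner-only action, which mirrors the review-and-delete control described above.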

What We Expect to Be Hard & How They'll Keep Improving It

Even with these strong safety measures, the road ahead won't be smooth. Before the wider release, there were already 'worries about fake videos and misleading information' (Academic Review on Sora) in circulation, showing how tricky such a powerful AI can be.

OpenAI itself admits that 'dangers are still showing up and we don't fully get them yet' (Sora 2 Technical Report).

External Scrutiny and Ongoing Refinements

External analyses have underscored the ongoing challenges in ensuring robust safeguards. A NewsGuard report, for instance, found that Sora 2 generated realistic videos promoting provably false claims in 80% of test cases, demonstrating its potential for misuse in spreading misinformation. The report also noted that the visible "Sora" watermark, intended for provenance, could be easily removed with free online tools, highlighting a limitation in its initial safeguards and the continuous need for refinement in AI content identification.

This is why the 'step-by-step rollout' matters: OpenAI is 'giving early access to Sora 2 through special invites' (Sora 2 Technical Report). This careful approach, much like how OpenAI has handled things before in the AI Voice Frontier, lets the company learn from how people actually use the tool and improve its systems.

The lack of widespread public access means we haven't seen many independent post-launch reviews yet, but the pre-launch discussion shows why we need to stay watchful.

The Good and the Bad: Finding the Right Mix of New Ideas and Safety Rules

Despite the challenges, the creative power of Sora 2 is huge. This model could 'make video marketing easier for everyone and bring new ideas to game making' (Academic Review on Sora), letting more people create awesome videos. Think about its impact on 'film-making and education' (Academic Review on Sora), making everyone more productive and creative (Sora 2 Technical Report).

Sora 2 is also a 'move towards AIs that can better copy how the real world works' (Sora Technical Report), suggesting a future where AI could serve as a general-purpose simulation tool. The promise is incredible, but it has to be balanced with constant attention to ethical use, hidden bias, and unintended consequences.

The Overlord's Verdict: A Cautious Optimism

Sora 2 is a huge jump forward in AI video generation, and OpenAI has clearly put in a lot of effort upfront with its comprehensive safety plan. While the goal of reducing the inherent risks is clear, the real test will come when lots of people start using it. Continuous learning, adapting to new threats, and open communication will be essential.

My verdict is one of cautious optimism. OpenAI has built a strong base for responsible AI, but making sure such a powerful tool is used responsibly is a never-ending task that will need constant adjustment and collaboration with the community.

Key Safety & Performance Metrics

| Feature | Metric Value | Unit/Description |
|---|---|---|
| Max Video Length | 60 | seconds (Sora Technical Report) |
| Provenance Signals | 3 | Visible watermark, C2PA metadata, internal tools (Official OpenAI Documentation) |
| Teen Safety Layers | 5 | Output limits, feed design, DM limits, parental controls, scrolling limits (Official OpenAI Documentation) |

Frequently Asked Questions

- How does Sora 2 specifically prevent the misuse of someone's likeness or deepfakes?

  Sora 2 uses a consent-based character system that lets people control how their digital self is used. It also stops videos of famous people from being made unless those people are using the character feature themselves, and users can review and delete content featuring their characters.

- Given the 'step-by-step rollout,' how will OpenAI address new, unforeseen risks as Sora 2 becomes more widely used?

  Under the step-by-step rollout plan, OpenAI gives access to a small group of users first and collects feedback from real use, so it can keep improving its safety systems and rules as new dangers show up.

- Can I truly trust the C2PA metadata and watermarks to identify all AI-generated content from Sora 2?

  Sora 2 videos include visible watermarks, C2PA metadata, and internal tracing tools. These give strong signals about where content came from, but they are not foolproof: detection of AI-made content keeps evolving, so staying watchful and building better tools remain key.

Sources & References

- Launching Sora responsibly | OpenAI

- Sora 2 System Card | OpenAI

- Video generation models as world simulators | OpenAI

- [2402.17177] Sora: A Review on Background, Technology, Limitations, and Opportunities of Large Vision Models

- [2403.14665] Sora OpenAI's Prelude: Social Media Perspectives on Sora OpenAI and the Future of AI Video Generation

- Feedback on Sora: Improvements to Usability and Pricing Structure
