FIS AI Assistant: Navigating the New Frontier of AI Risk in Financial Services

Honestly, the promise of AI in finance is huge, but what hidden risks does it bring, and how can financial companies actually manage them? I've been digging into the latest research to find out what's really going on behind the hype.

Quick Overview: The AI Assistant's Promise in Risk Management

Imagine a world where risk management doesn't just wait for problems but is smart enough to see them coming. That's the vision behind an 'AI Assistant' for finance: a tool designed to change how financial companies identify, assess, and mitigate risks. Big companies are pushing hard to make these tools a reality.

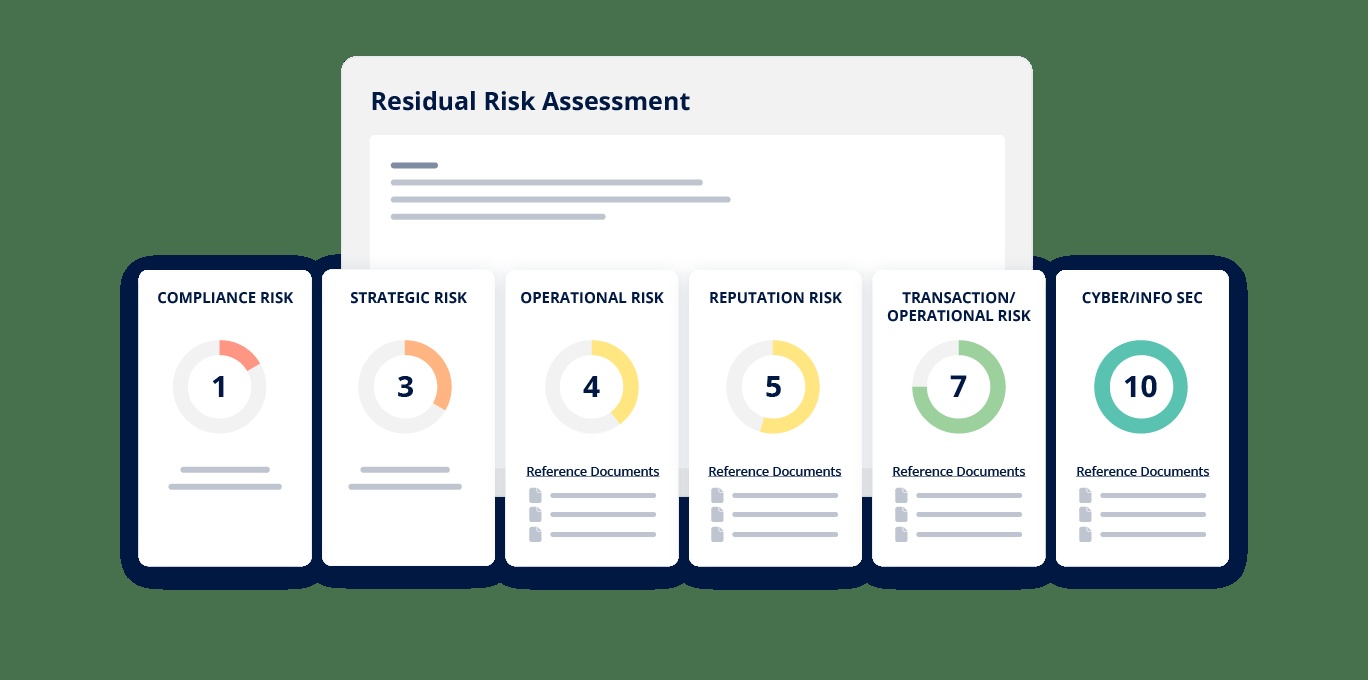

Basically, enterprise risk management in finance means identifying, assessing, and mitigating all sorts of risks: financial, operational, regulatory, and strategic (FIS FAQ). This isn't just about following the rules; it's essential for protecting companies from financial losses, regulatory fines, and reputational damage (FIS FAQ).

The best part? An AI assistant could bring speed, deeper insight, and automated checks to these tricky tasks.

But wait, there's a catch. Despite the huge possibilities, the financial world is being pretty careful about adopting AI. The benefits are clear, but AI also brings new problems of its own that need careful thought and strong plans to handle.

A Closer Look: How AI Helps Manage Risk

So, what kind of AI would power an assistant like this? We're talking about generative AI (AI that can create new content, like text or images) and Large Language Models, or LLMs (models trained on huge amounts of text to understand and produce human-like language).

Honestly, these aren't just buzzwords; they're the engines that could really improve how risk is managed.

Generally, technology improves risk management through advanced analytics (finding patterns in massive amounts of data), real-time monitoring (spotting issues as they happen), automated compliance checks (making sure rules are followed without manual intervention), and consolidated reporting (one clear picture of all risks across a company) (FIS FAQ).

An AI assistant would use these capabilities to turn raw data into insights you can actually act on.

While I don't have the exact blueprint for an AI assistant like the one FIS might use, the basic design would involve these models sifting through large volumes of financial data, regulations, and market changes.

The main advantages are speed and scale: AI can analyze data far faster than humans, spot subtle correlations, and flag anomalies that people might miss. All of this makes you more resilient to risk.
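To make "flagging anomalies" a bit more concrete, here is a minimal sketch of one classic technique, the modified z-score based on the median absolute deviation. This is not how FIS or any vendor does it; the function name `flag_outliers`, the sample `daily_exposures` figures, and the 3.5 threshold are all my own illustrative assumptions.

```python
from statistics import median

def flag_outliers(amounts, threshold=3.5):
    """Return the indices of values whose modified z-score exceeds threshold."""
    med = median(amounts)
    # Median absolute deviation: a spread measure that outliers barely move.
    mad = median(abs(x - med) for x in amounts)
    if mad == 0:
        return []  # no spread at all, so this method cannot rank anything
    # 0.6745 rescales the MAD so scores are comparable to ordinary z-scores.
    return [i for i, x in enumerate(amounts)
            if abs(0.6745 * (x - med) / mad) > threshold]

# Hypothetical daily exposure figures (illustrative only).
daily_exposures = [102.0, 98.5, 101.2, 99.8, 100.4, 250.0, 97.9]
print(flag_outliers(daily_exposures))  # → [5]
```

A real system would look at many signals at once, but the idea is the same: define what "normal" looks like, then surface whatever sits far outside it for a person to review.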

The Potential: How AI Could Change How We Do Risk Work

Let's talk about the huge, exciting possibilities. An AI assistant in risk management isn't just about doing things faster; it's about doing entirely new things.

Imagine an AI that can instantly read through thousands of new rules, find the important changes, and even write the first drafts of reports to show you're following them. This isn't science fiction; it's the promise of AI.

As J.P. James, Group President, Office of the CFO at FIS, stated, "Businesses need powerful modeling, risk management and reporting tools to accurately quantify their exposure and keep their money hard at work. But as these risks become more interconnected and harder to predict, actuaries also need tools that don't slow them down. By embedding AI assistance directly into our Insurance Risk Suite, we're giving actuaries more time to focus on what truly matters: accurately quantifying, reporting, managing and mitigating risk."

My analysis suggests that such a tool could make complicated financial tasks much smoother, offering simple, cost-effective, and secure ways to manage tricky risk situations (FIS Marketing).

It could free up human experts from the tedious work of gathering data, allowing them to focus on analysis and high-stakes decisions. Honestly, the speed and depth of analysis that AI brings to complex financial data are game-changing.

Specifically, the FIS Insurance Risk Suite AI Assistant is designed to eliminate hours of manual searching through complex documentation, enabling actuaries to build more resilient models and achieve faster modeling, which in turn allows for more accurate policy pricing and earlier detection of emerging risks.

Designing for Trust: What Users Need to Trust AI Assistants

The power of AI is clear, but its true value in a sensitive field like finance really depends on trust. So, how do you design an AI risk management assistant that users can rely on without trusting it blindly? It comes down to smart user experience (UX) design.

The 'Independent Critique' points out that building trust between people and generative AI takes good training, easy-to-use designs, and strong safeguards (Independent Critique). This means clear explanations of how the AI got its answers, transparency about where its information comes from, and ways for people to check and step in.

The interface should make it easy to verify what the AI says, understand its limits, and give feedback, so that people are always in charge. This focus on verifying AI's answers echoes discussions in articles like Lightkeeper Beacon: The Promise of Verifiable AI in Finance – Hype or Revolution?, which suggests the need is industry-wide.
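One way to picture "people are always in charge" is a simple gate that sends an answer to a human reviewer whenever it lacks sources or the system isn't confident. The sketch below is purely illustrative: the `AssistantAnswer` record, its `confidence` score, and the 0.8 cutoff are hypothetical names and values I made up, not part of any FIS product.

```python
from dataclasses import dataclass, field

@dataclass
class AssistantAnswer:
    text: str
    # Documents the answer cites, shown to the user for verification.
    sources: list = field(default_factory=list)
    # Hypothetical 0..1 score reported by the assistant.
    confidence: float = 0.0

def needs_human_review(answer, min_confidence=0.8):
    """Route to a reviewer when the answer is unsourced or low-confidence."""
    return not answer.sources or answer.confidence < min_confidence

sourced = AssistantAnswer("Reserve rule X applies.", ["model_guide.pdf"], 0.92)
unsourced = AssistantAnswer("Reserve rule X applies.", [], 0.97)
print(needs_human_review(sourced))    # → False
print(needs_human_review(unsourced))  # → True
```

Notice that a confident answer with no sources still goes to review. That design choice reflects the point above: transparency about where information comes from matters as much as how sure the AI sounds.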

The Unseen Risks: What Experts Are Saying About AI in Finance

Here's the deal: with great power comes great responsibility. While the potential of AI in finance is huge, it also brings a set of new, tricky risks that financial companies need to work through.

Recent research by the Alan Turing Institute and the Partnership on AI and Finance (PAIF) has highlighted these problems. Any AI assistant, including the AI Assistant concept discussed here, must tackle them directly.

The researchers identified several new risks introduced by generative AI in the finance sector (Independent Critique):

- Document Base Quality: The quality and reliability of the documents the AI draws on. Garbage in, garbage out, right?

- Legal and Compliance: Making sure AI's answers follow tricky and changing financial rules.

- Ground Truth and Validity: How do we check if the AI's answers are really true, not just made-up stories that sound good?

- Vendor Dependency: Relying too much on outside AI companies brings its own problems.

- Re-versioning: Handling changes and updates to AI models and how they might affect checking risks.

- Architectural Complexity: The super complicated nature of AI systems can make them hard to understand, watch, and check.

- Human-AI Interaction: The risk of people trusting the AI too much, misunderstanding it, or misinterpreting its answers.

As Lukasz Szpruch, a leading figure in this research, stressed, "This work reflects a shared commitment across PAIF to ensure that AI adoption in financial services is safe, responsible, and aligned with regulatory expectations..." (Independent Critique).

Honestly, this isn't just theory; these are real-world challenges that could have serious consequences.

Navigating the Tricky Parts: How to Use AI Responsibly

So, how do financial companies deal with all these new risks? The experts have provided a clear path forward. The 'Independent Critique' gives us key advice for handling these problems, which is essential for any company using or thinking about using an AI assistant.

Key recommendations include (Independent Critique):

- Inventory all your AI models and how they fit into your whole work process: not just the AI itself, but how it works with what you already do.

- Monitor the AI's performance more closely throughout its lifecycle, from when you start using it until you stop.

- Add checks on outside companies by looking closely at AI providers.

- Set up cross-functional governance so that legal, risk, tech, and business teams all have a say.
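The inventory and lifecycle-monitoring recommendations can be sketched as a small record per model plus a check for overdue reviews. Treat this as a toy illustration under my own assumptions: the `ModelRecord` fields, the example entries, and the 90-day review window are hypothetical, and the report itself doesn't prescribe any of them.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

@dataclass
class ModelRecord:
    name: str
    workflow: str           # business process the model is embedded in
    vendor: Optional[str]   # None for in-house models
    last_review: date

def overdue_reviews(inventory, today, max_age_days=90):
    """Return names of models not reviewed within the last max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return [m.name for m in inventory if m.last_review < cutoff]

# Hypothetical inventory entries (illustrative only).
inventory = [
    ModelRecord("doc-search-llm", "actuarial guidance", "ExampleVendor", date(2025, 1, 15)),
    ModelRecord("report-drafter", "compliance reporting", None, date(2025, 5, 1)),
]
print(overdue_reviews(inventory, today=date(2025, 6, 1)))  # → ['doc-search-llm']
```

Recording the `workflow` and `vendor` next to each model is the point: it keeps the inventory about the whole process and its third-party dependencies, not just the model in isolation.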

Also, checking on outside companies is extra important now, since more and more AI solutions come from them. These recommendations fit well with the broader framework discussed in Treasury's New AI Playbook: A Deep Dive into the Lexicon and Risk Framework for Financial Services, which stresses the need for one clear way to handle AI risks.

Honestly, these aren't brand new ideas; they involve adapting existing model risk management guidance, like SR 11-7 from the US Federal Reserve and SS1/23 from the UK Prudential Regulation Authority, to fit the special traits of generative AI. It's about updating how we manage risks to keep up with fast-changing tech.

FIS AI Assistant vs. Key Competitors

While the FIS Insurance Risk Suite AI Assistant focuses on providing instant actuarial guidance and streamlining risk model management within the insurance sector, the broader AI risk management landscape features diverse solutions with different strengths:

- Credo AI: Positioned as an "AI Governance Powerhouse," Credo AI specializes in responsible AI governance, offering tools for policy management, risk assessments, and compliance tracking aligned with regulatory frameworks like NIST AI RMF and the EU AI Act. Its focus is on the ethical and compliant deployment of AI systems across an organization, rather than specific operational risk modeling.

- Energent.ai: This platform is recognized for "Autonomous Data Intelligence" and "enterprise risk management," with a primary strength in analytics accuracy (boasting 94.4%). Energent.ai provides a no-code automation engine that transforms unstructured data into structured insights and presentation-ready visualizations, catering to broader data analysis and intelligence needs for risk management.

These comparisons highlight that while FIS's offering is deeply integrated into insurance-specific actuarial workflows, other solutions address the wider spectrum of AI governance, data intelligence, and general enterprise risk management.

| Feature | Old Ways of Managing Risk | AI-Powered Risk Management (Like an AI Assistant) |
|---|---|---|
| How Fast Data is Looked At | Manual, time-consuming, slow because people can only do so much. | Automatic, watching huge amounts of data happen right now. |
| Finding Patterns | Depends on what people know and set rules; easy to miss things. | Spots tiny, tricky connections and odd things in all sorts of data. |
| Seeing Problems Coming vs. Reacting | Often just reacts, dealing with problems after they happen. | More proactive, guessing risks before they even show up. |
| Following Rules | People manually track and understand new rules. | Automatic watching of new rules and writing first drafts of compliance reports. |
| How People Spend Their Time | People spend a lot of time just gathering data. | Lets people focus on smart thinking and making big choices. |
| New Problems AI Brings | Risks with daily work, markets, loans, and following rules. | Adds risks like bad data, unfairness, not knowing why AI made a choice, relying on outside companies, and complicated systems. |

My Takeaway: What's Next for AI in Finance

After looking at all the information, it's clear: AI assistants in finance, like the AI Assistant concept discussed here, hold huge potential. They can make things run smoother, give better insights, and change how financial companies handle risk. But here's the most important thing to remember: the real issue lies not just in the generative AI itself, but in the whole system of work it's part of (Independent Critique).

For financial companies thinking about or using these tools, a complete approach to managing AI is essential. This means going beyond a one-time model check to continuous, detailed quality checks and scenario testing (Independent Critique). It's about understanding the whole AI ecosystem, from the data it gets to how people use it, and building strong plans that make sure it's safe, responsible, and compliant.

The future of financial AI is bright, but its true impact will only be realized by those who actively manage its inherent risks with care and smart planning.
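As a rough picture of what ongoing quality checks could look like, here is a minimal sketch that replays a fixed set of questions against an assistant and reports the ones whose answers miss a required phrase. Everything here is an assumption for illustration: `run_quality_checks`, the `stub_assistant` stand-in, and the test cases are hypothetical, and a real setup would call the actual assistant and use much richer checks.

```python
def run_quality_checks(ask, cases):
    """Return the prompts whose answers miss any required phrase."""
    failures = []
    for prompt, required in cases:
        answer = ask(prompt).lower()
        if not all(phrase.lower() in answer for phrase in required):
            failures.append(prompt)
    return failures

# Stand-in for a real assistant call; purely illustrative.
def stub_assistant(prompt):
    canned = {
        "What does SR 11-7 cover?": "SR 11-7 is guidance on model risk management.",
    }
    return canned.get(prompt, "I don't know.")

# Each case pairs a question with phrases a good answer must contain.
cases = [
    ("What does SR 11-7 cover?", ["model risk"]),
    ("What does SS1/23 cover?", ["model risk"]),
]
print(run_quality_checks(stub_assistant, cases))  # → ['What does SS1/23 cover?']
```

Running the same cases after every model or vendor update is one simple way to catch answers that quietly get worse between versions.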

Frequently Asked Questions

- Q: How does an AI Assistant specifically make finding risks better than old ways?

A: An AI Assistant can look at huge amounts of data much faster than people, spotting tiny patterns, connections, and odd things that might be missed. This helps find risks before they become big problems.

- Q: What are the biggest new problems that come with using generative AI to manage financial risks?

A: New problems include bad quality information (garbage in, garbage out), making sure it follows legal and compliance rules, checking if AI's answers are actually true, relying too much on outside companies, handling updates to AI models, complicated system designs, and people trusting AI too much or misunderstanding it.

- Q: How can financial institutions build trust in AI risk checks so people don't rely on it too much?

A: Building trust requires an open and easy-to-use design, clear explanations of what the AI says, good training, strong safety rules, and ways for people to check and step in. Constantly checking and testing different situations are also super important.

Sources & References

- FIS FAQ - Enterprise Risk Management

- FIS Marketing - Solutions

- The Alan Turing Institute and PAIF - New Report Identifies Risks of Generative AI in Finance

- IBM - What is Generative AI?

- IBM - What are Large Language Models (LLMs)?

- Risk Management & Compliance Insights

- Research explores risks of using AI in the financial sector | The Alan Turing Institute

- How Generative AI Impacts Your FI's Risk Management Program

Yousef S. | Latest AI

AI Automation Specialist & Tech Editor

Yousef S. is a distinguished AI Automation Specialist and Tech Editor at Latest AI, with a profound focus on the intersection of artificial intelligence and financial services. Holding a Master's degree in Financial Technology (FinTech) and certified in AI Ethics and Risk Management, Yousef brings over a decade of experience in deploying advanced AI solutions for leading financial institutions. His expertise spans enterprise AI implementation, ROI analysis, and the strategic integration of conversational AI and generative models into complex risk management frameworks. Yousef's work has been featured in prominent industry publications, where he frequently shares hands-on insights into real-world AI applications, emphasizing responsible innovation and practical risk mitigation strategies in the evolving digital finance landscape.