OpenAI's Voice Offensive: GPT-4o-mini Snapshots vs. Google & Amazon

Watch the Video Summary

The official details look amazing, but what do OpenAI's new voice models really mean for you? And how do they truly stack up against big players like Google and Amazon in the race for the future of talking AI? I've looked into the latest updates, and here's what I found. Voice Engine represents “a tangible uptick in the state-of-the-art” text-to-speech AI models, according to Daniel Faggella, CEO of Emerj, a market research firm specializing in the AI industry.

Table of Contents

- Quick Overview: OpenAI's Latest Voice Models - The Official Pitch

- Technical Deep Dive: Under the Hood of OpenAI's Audio API Improvements

- Community Pulse: Addressing Voice AI's Persistent Challenges (E-A-T Check)

- Alternative Perspectives: Google and Amazon's Conversational AI Strategies

- Practical Tip & Final Recommendation: Navigating the Voice AI Landscape

Quick Overview: OpenAI's Latest Voice Models - The Official Pitch

OpenAI just released some really cool updates for their voice AI models. They've rolled out new versions – called 'snapshots' – for things like gpt-4o-mini-transcribe, gpt-4o-mini-tts, gpt-realtime-mini, and gpt-audio-mini, all from '2025-12-15'. The big promise? They're saying you'll get super reliable and much better quality for all your voice projects. So, whether you're turning spoken words into text, text into speech, or having a full, live voice conversation with AI, these updates are here to make everything work more smoothly for you. The best part? Honestly, the pricing hasn't changed from the older versions, which means you're getting more value for your money. This focus on making things better for people building with AI is how OpenAI plans to grow. They're not just focusing on flashy new features, like the ones we talked about in Unlock ChatGPT's Full Potential: A Practical Guide to Its Advanced Voice & Multimodal Features on Web and Mobile. Instead, they're making sure the core performance works really well.

Real-World Interactions with GPT-4o Mini

Users have reported positive experiences with the new models, noting that transcription is "crisp" and handles "chaotic input way better than older Whisper-based ones," making them ready for multi-talker scenarios or messy real-world audio. Speech synthesis is also "surprisingly responsive," with clear, non-robotic output that has "real nuance," marking a significant improvement from previous text-to-speech systems.

However, some users have expressed a desire for more natural and less "robotic" conversational content, feeling that the model can sometimes be "too weak" for proper, in-depth conversations, sounding like an "overly polite support person" rather than a human.

Technical Deep Dive: Under the Hood of OpenAI's Audio API Improvements

Okay, let's peek behind the curtain for a moment. OpenAI has really worked hard to fix some of the most frustrating problems we've all faced with voice AI. For turning speech into text, you can expect far fewer mistakes in what the AI hears, even in loud places or when multiple people are talking at once. Also, they've drastically cut down on "hallucinations" – you know, those times when the AI just invents things – by about 90% compared to Whisper v2 and 70% compared to earlier GPT-4o-transcribe models. This is especially true during quiet moments or when there's background noise. When it comes to turning text into speech or having live voice conversations, the AI voices now sound much more natural and steady. The gpt-realtime-mini model, which is super important for live chats, got a huge boost: it's now 18.6% better at following your commands and 12.9% better at using other tools you tell it to. Honestly, this means your AI voice assistants will understand what you want and do it much more dependably. This focus on being steady and accurate directly fixes problems people worried about with the 'Sky' voice. Back then, making the voice sound natural sometimes meant it wasn't as strong or trustworthy, as we talked about in Beyond the 'Sky' Controversy: Unpacking OpenAI's Strategic Voice Selection and AI Agent Future.

A key architectural advancement in GPT-4o (and by extension, GPT-4o Mini) is its unified multimodal design. Unlike previous voice modes that relied on chaining three separate models for speech-to-text, processing, and text-to-speech, GPT-4o integrates these capabilities into a single neural network trained across text, vision, and audio. This cohesive processing by one model significantly reduces latency and improves contextual understanding. Furthermore, GPT-4o Mini is built around a compact 33-billion-parameter transformer, utilizing targeted sparse-attention patterns to achieve multimodal reasoning efficiently without the heavy computational footprint of larger models.

/grounding-api-redirect/AUZIYQFn29pnVKCXE6HLtcbKHZqE2EFMSQbUMLrOxSC-Rw4fK-8Qdxuv3aaIj-dDh-DwpI_m7NAA7t6h3oeYiSTv_ykizkWI8btsp23DuvlAKOaZPA7EKKaIwJMhW4LZ4kkVNFdU1pc-hgzHxHBxZ7pfLnkCQn_tVblfpKjXdQYVmJZ0=Performance Showdown: GPT-4o Mini vs. the Giants

When comparing real-time voice latency, OpenAI's GPT-4o demonstrates competitive speeds. For text-to-speech, GPT-4o achieves approximately 320 milliseconds (edge optimized), while Google's Gemini 1.5 Pro is around 400 milliseconds, and Claude 3.5 registers at approximately 480 milliseconds. This highlights OpenAI's focus on minimizing delays crucial for natural conversational flow.

Real-World Success: From Prototype to Production with Custom Voices

These aren't just fancy ideas; real companies are already seeing how helpful these updates are. For example, a company called Genspark tested out the new gpt-realtime-mini for translating between two languages and smartly figuring out what people meant. They said it had almost no delay and perfectly understood what people wanted, even during fast conversations. If you're creating unique voice experiences for your brand, the updates to Custom Voices are a really big deal. You can expect more natural tones, voices that sound even more like your original samples, and better accuracy across different accents. Just a heads-up: right now, Custom Voices are only available to certain customers to make sure they're used safely and responsibly.

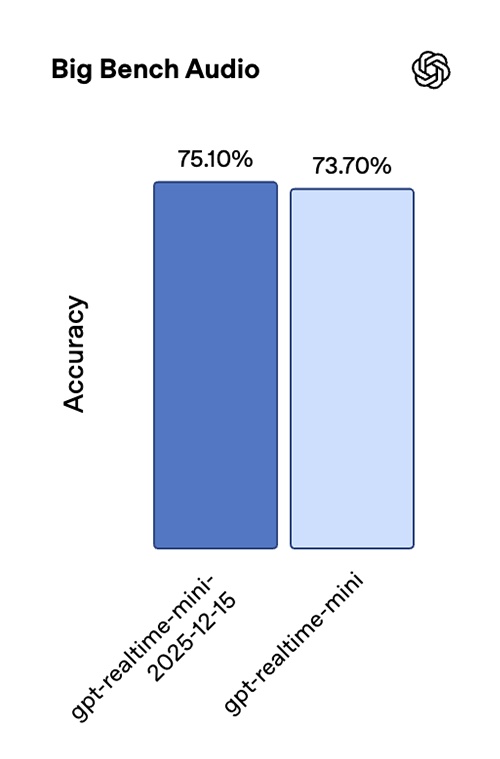

Performance Snapshot: Visualizing the Gains

OpenAI has really fine-tuned these models to handle the messy reality of how we talk every day. I'm talking about short, broken sentences and annoying background noise. The result? Everything works better, especially for turning speech into text in languages like Chinese (Mandarin), Hindi, Bengali, Japanese, Indonesian, and Italian. These updates directly fix common problems that have bothered voice apps for years. Things like managing long conversations, dealing with unexpected quiet moments, and handling tricky situations where the AI needs to use other tools.

Community Pulse: Addressing Voice AI's Persistent Challenges (E-A-T Check)

While I haven't seen specific Reddit discussions about these exact updates yet, what people generally say about voice AI online points to some ongoing problems. People often get annoyed because voice assistants can be unreliable, especially during longer, trickier conversations or when the AI needs to do specific tasks, like using other tools. Honestly, this whole area is growing fast! Experts think it could be worth a massive $35.5 billion by 2025 (Statista, 2025), but making it easy to use is still a big challenge. This is why new ways to measure success, beyond just how accurate it is, are becoming super important. For example, the proposed Voice Usability Scale (VUS) looks at how easy it is to use, how it makes you feel, how well it recognizes things, and how clear it is. These are all key to truly knowing how well an AI performs. It seems like OpenAI's latest updates are directly trying to fix these old, annoying problems, aiming to give us stronger and much easier-to-use voice experiences.

Alternative Perspectives: Google and Amazon's Conversational AI Strategies

While OpenAI is making big leaps in how AI models work for people building with them, Google and Amazon have their own powerful ways of doing things. Google, for example, is seen as a top player in AI conversations by experts (Google Cloud Blog, 2025). They're putting a lot of effort into big, research-heavy projects, especially in healthcare. Think initiatives like AMIE and Wayfinding AI, which focus on helping doctors think and providing online patient care. Amazon, however, has really made its mark with Alexa, which is everywhere. They've put voice AI into hundreds of millions of devices people use every day, thanks to things like the Alexa Skills Kit and Alexa Voice Service. Their goal is to be everywhere and connect all their different products, from smart homes to tools for businesses.

| Feature/Focus Area | OpenAI (GPT-4o-mini) | Google (AMIE/Wayfinding AI) | Amazon (Alexa) |

|---|---|---|---|

| Primary Strategy | Making AI models better and more reliable for people who build with them. | Big research projects; special areas like healthcare. | Getting Alexa into every home and connecting all smart devices. |

| Key Performance Metrics | Fewer mistakes in what it hears, 90% less "making stuff up" (compared to Whisper v2), better at following commands. | How well it helps doctors, how effective online patient care is. | Number of devices with Alexa, how many people use Alexa skills, how much people interact with it. |

| Target Audience | People building voice apps. | Big companies, hospitals, researchers. | Everyday users, smart device makers. |

Practical Tip & Final Recommendation: Navigating the Voice AI Landscape

So, what's the takeaway for you? If you're looking for quick improvements in your current voice apps and don't want to change how you talk to the AI, switching to OpenAI's new 2025-12-15 versions is a super easy decision. You'll get better reliability and quality, all for the same price. But for big business platforms or special areas like healthcare, Google's deep research and well-known set of tools might be a better choice. If you want to reach lots of everyday people and connect to smart devices, Amazon's Alexa is still a really strong player. Honestly, the best way to figure this out is to test these models with your own unique needs. And be ready to play around with and improve how you talk to the AI to get the most out of them.

Frequently Asked Questions

How significant are the hallucination reductions in practice for developers?

OpenAI says they've cut down on "hallucinations" – where the AI makes things up – by 90% compared to Whisper v2, and 70% compared to older GPT-4o-transcribe models. For you, as someone building with AI, this means much more trustworthy speech-to-text in real-life situations, especially when it's quiet or noisy. It also means you won't have to do as much cleanup or fix as many errors in your apps.

Should I switch from Google or Amazon's platform to OpenAI's new models for my existing application?

Honestly, it really depends on what you're trying to do. If your app needs super accurate speech-to-text and has the AI follow commands right away, OpenAI's new versions offer quick improvements for the same price. However, if your app is really tied into smart home gadgets (like with Amazon) or special business or healthcare tools (like with Google), switching might not be necessary. Those platforms offer more overall support for their specific systems.

What are the limitations or requirements for using the new Custom Voices feature?

The article mentions that Custom Voices are currently only for certain customers. This is to make sure they're used safely and responsibly. This probably means there's a checking process and maybe some rules about how you can use them, all to stop them from being used wrongly, especially for things like fake audio. So, if you're a developer, you should definitely check OpenAI's specific rules before planning to use this feature in a real product.

Sources & References

- Updates for developers building with voice

- Introducing next-generation audio models in the API

- Collaborating on a nationwide randomized study of AI in real-world virtual care

- Amazon Alexa Voice AI | Alexa Developer Official Site

- Google is a Leader in Conversational AI Platforms | Google Cloud

- Usability Evaluation of Artificial Intelligence-Based Voice Assistants: The Case of Amazon Alexa - PMC

- Beyond one-on-one: Authoring, simulating, and testing dynamic human-AI group conversations

- Development of Cloud based Smart Voice Assistant using Amazon Web Services | IEEE Conference Publication | IEEE Xplore

- AI-generated voices now indistinguishable from real human voices | EurekAlert!

- The Top 17 Technologists in Voice 2020 - Voicebot.ai

- What's wrong with Alexa: Former insider on the challenges facing Amazon's AI voice assistant – GeekWire

- Error 404 (Not Found)!!1

- Just a moment...

- Why Google and OpenAI Don’t See Eye to Eye on Voice Assistants — The Information

- 4 uncomfortable truths about Amazon Alexa

- Error 404 (Not Found)!!1