Claude Opus 4.6: Anthropic's Agentic Leap Forward, But Not Without Its Quirks

Anthropic's new model, Claude Opus 4.6, is pitched as a game-changer for hard, multi-step work. But does it live up to the hype, or do hidden problems show up once you put it to real use? I've gone through the official announcement, the technical details, and, most importantly, what real users are saying online to give you an honest picture.

Quick Overview: The Official Pitch vs. The Reality

Anthropic officially launched Claude Opus 4.6 on February 5, 2026, and the full details are in the official announcement at https://www.anthropic.com/news/claude-opus-4-6. So what's the big deal? Anthropic calls it its "smartest model ever" and says it achieves state-of-the-art (best-in-class) results on many benchmarks. The headline feature is a 1M-token context window, currently in beta. In plain terms, the model can take in and work with a massive amount of information, several books' worth of text, in a single conversation.

The promises are big: better coding, better planning of complex tasks, and more reliable work on large business projects. Sounds like a dream for anyone building with AI, right?

But there's a catch. When I looked at what people are actually saying online, the picture got more complicated. Many users are genuinely impressed, but some Reddit users report that Opus 4.6 sometimes "second-guesses itself" and can make up information ("hallucinate") when it faces new or unusual problems.

A Closer Look: How It Plans and Remembers So Much

Let's dig into what makes this model special. The biggest feature, without a doubt, is the 1M-token context window (in beta), a big jump from the 200K-token window of earlier Opus models. For anyone who builds software or works with lots of data, this is huge: if you're working on a big codebase or analyzing piles of documents, far less of your material has to be left out or re-explained. Think of it as giving the AI a much larger working memory for your whole project.
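
If you want a rough sense of whether your own project would fit in that window, here's a back-of-the-envelope check in Python. It's a sketch that assumes the common rule of thumb of about 4 characters per token for English text and code; real token counts depend on the model's tokenizer, so treat the result as an estimate. The function names and the 50,000-token reserve for the model's reply are my own illustrative choices, not anything from Anthropic.

```python
# Rough check: does a body of text fit in a 1M-token context window?
# ASSUMPTION: ~4 characters per token, a common rule of thumb for English
# text and code. Actual counts depend on the model's tokenizer.

CONTEXT_WINDOW_TOKENS = 1_000_000  # Opus 4.6's 1M-token window (beta)
CHARS_PER_TOKEN = 4                # heuristic, not an exact tokenizer


def estimate_tokens(text: str) -> int:
    """Estimate the token count of `text` using the chars-per-token heuristic."""
    # Ceiling division so short non-empty strings count as at least one token.
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(texts: list[str], reserve_for_output: int = 50_000) -> bool:
    """Return True if all texts, plus room reserved for the reply, fit the window."""
    total = sum(estimate_tokens(t) for t in texts)
    return total + reserve_for_output <= CONTEXT_WINDOW_TOKENS


if __name__ == "__main__":
    # A hypothetical tiny "repo": 1,000 copies of a two-line file.
    small_repo = ["def main():\n    pass\n"] * 1_000
    print(estimate_tokens(small_repo[0]))
    print(fits_in_context(small_repo))
```

Reading your source files into `texts` would turn this into a quick pre-flight check; when you need exact numbers, use a real token counter instead of the heuristic.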

But it's not just about memory. Anthropic has also improved Opus 4.6's agentic capabilities: its ability to plan, carry out, and adjust complex multi-step tasks with little hand-holding. According to Anthropic, it plans "more carefully," sustains tasks "for longer," and is "more reliable" in large codebases. In other words, it isn't just writing text; it behaves more like a capable project manager working alongside you.

If you're a developer using the API (the way your own programs connect to the model), Anthropic has added some useful new controls. One is 'compaction,' which summarizes earlier parts of a long-running task so the model can keep going without running out of room or losing track of what it was doing. Another is the 'effort' control, which lets you trade intelligence against speed and cost: you can tell the model to 'think' less on easy tasks and save money. On the benchmark side, Anthropic reports that Opus 4.6 scored 65.4% on Terminal-Bench 2.0.
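
Anthropic hasn't published how compaction works internally, so the sketch below is only a conceptual illustration of the general idea: once a conversation's history grows past a token budget, older turns are folded into a summary while recent turns stay intact. The `summarize` function is a hypothetical placeholder (in a real system it would be another model call), and token counting uses the rough 4-characters-per-token heuristic.

```python
# Conceptual sketch of context compaction (NOT Anthropic's implementation).
# ASSUMPTIONS: ~4 characters per token; `summarize` is a hypothetical
# placeholder for what would really be a model-generated summary.


def approx_tokens(text: str) -> int:
    """Rough token estimate using a 4-characters-per-token heuristic."""
    return -(-len(text) // 4)  # ceiling division


def summarize(turns: list[str]) -> str:
    """Hypothetical summarizer: just records how many turns it replaced."""
    return f"[summary of {len(turns)} earlier turns]"


def compact(history: list[str], budget: int, keep_recent: int = 2) -> list[str]:
    """Fold older turns into one summary once history exceeds `budget` tokens.

    The last `keep_recent` turns are kept verbatim. Those recent turns alone
    may still exceed the budget; a real system would need to handle that too.
    """
    total = sum(approx_tokens(turn) for turn in history)
    if total <= budget or len(history) <= keep_recent:
        return history  # under budget, or nothing older to fold away
    split = len(history) - keep_recent
    older, recent = history[:split], history[split:]
    return [summarize(older)] + recent


if __name__ == "__main__":
    history = [
        "user: " + "x" * 400,        # a long early request
        "assistant: " + "y" * 400,   # a long early reply
        "user: what's next?",
        "assistant: running the tests now",
    ]
    for turn in compact(history, budget=100):
        print(turn)
```

The design choice worth noticing is what stays verbatim: the most recent turns usually matter most for the next step, so they're kept whole while older material is condensed.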

To further illustrate its capabilities, here are some key benchmark results:

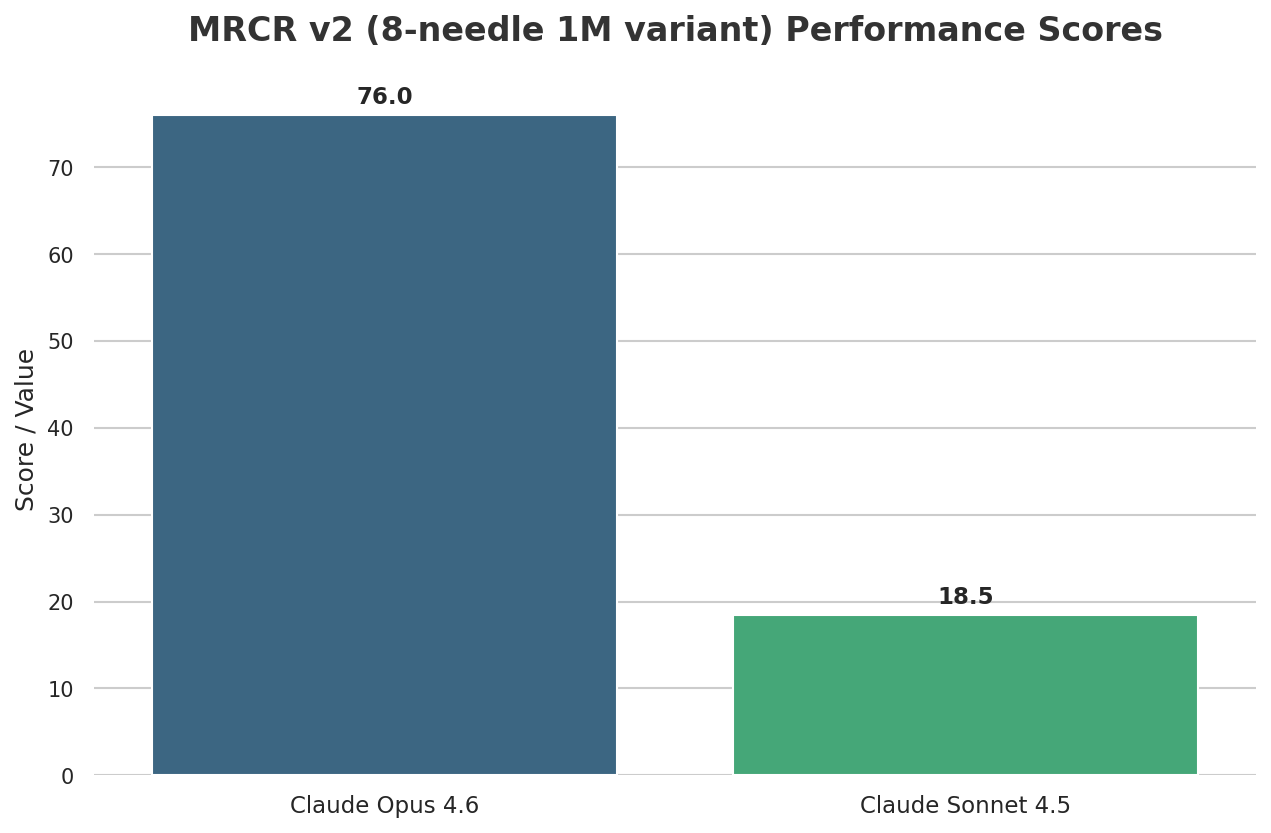

- MRCR v2 (8-needle, 1M variant): Opus 4.6 scored 76%, compared with just 18.5% for Sonnet 4.5 under the same conditions, a big jump in its ability to find specific pieces of information buried in very long contexts.

- SWE-bench Verified: Claude Opus 4.6 achieved an impressive 80.84% on this benchmark, which evaluates AI models on real-world software engineering tasks.

- BigLaw Bench: For legal reasoning, Opus 4.6 achieved the highest score of any Claude model at 90.2%.

Opus 4.6 in Action: Real-World Applications

Beyond the benchmarks, Claude Opus 4.6 is already making a tangible impact in real-world scenarios for businesses and developers:

- Codebase Migration at SentinelOne: Gregor Stewart, Chief AI Officer at SentinelOne, reported that Claude Opus 4.6 handled a "multi-million-line codebase migration like a senior engineer," planning upfront, adapting its strategy, and finishing the task in half the time.

- Enhanced Spreadsheet Agents at Shortcut.ai: Nico Christie, Co-founder & CTO of Shortcut.ai, noted a significant performance jump, stating that "real-world tasks that were challenging for Opus [4.5] suddenly became easy" for their spreadsheet agents with Opus 4.6.

- Cybersecurity Vulnerability Detection: Anthropic's latest model has been credited with finding over 500 previously unknown high-severity security flaws in open-source libraries with minimal prompting, showcasing its advanced capabilities in defensive cybersecurity.

Real-World Wins: How It Helps Big Businesses and Coders

Here's where Opus 4.6 really stands out: large, high-stakes business projects and complex coding work. Companies that got early access report that it handles tasks earlier models simply couldn't.

For example, it scored 90.2% on BigLaw Bench, the highest of any Claude model. That suggests it's very good at legal reasoning: not just answering simple questions, but working through complicated legal documents and case law.

In cybersecurity, Opus 4.6 produced the best results in 38 out of 40 investigations when compared with Claude 4.5 models. And for coders, as SentinelOne's experience shows, it can migrate a multi-million-line codebase like a seasoned engineer, a massive job for any AI. Working independently and precisely across that much code is a big step forward.

Quick Look: How It Performs, What It Costs, and Where You Can Get It

When it comes to raw capability, Opus 4.6 is a standout. On GDPval-AA, which measures how well a model handles economically valuable knowledge work in fields like finance and law, it beats the next-best model, OpenAI's GPT-5.2, by about 144 Elo points. That's a big lead, and it points to stronger performance on tough professional problems. Opus 4.6 is also a 'hybrid reasoning' model: it can adjust how deeply it 'thinks' depending on the task, which saves time and tokens on simpler work.
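
An Elo gap is easier to grasp as a head-to-head win rate. Assuming GDPval-AA's ratings follow the standard Elo scale (base 10, 400-point logistic curve, which I haven't verified for this benchmark), a 144-point lead means the higher-rated model is expected to win roughly 70% of pairwise comparisons, counting ties as half a win:

```python
# Convert an Elo rating gap into an expected head-to-head score.
# ASSUMPTION: ratings use the standard Elo logistic curve (base 10, scale 400).


def expected_score(elo_gap: float) -> float:
    """Expected score (wins plus half of ties) for the side leading by `elo_gap`."""
    return 1.0 / (1.0 + 10 ** (-elo_gap / 400))


if __name__ == "__main__":
    print(f"{expected_score(144):.1%}")  # 69.6%
```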

What about price? Opus 4.6 costs $5 per million input tokens (what you send to the model) and $25 per million output tokens (what it sends back). That puts it in the premium tier compared with smaller models like Sonnet, which makes sense given its capabilities. It's available on claude.ai, through Anthropic's API for developers, and on "all major cloud platforms," so it's easy to access however you work.
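
To turn those per-million-token prices into a per-request number, here's a minimal cost estimator. It uses only the two rates quoted above and ignores discounts such as prompt caching or batch processing, as well as any different rates that may apply to very long prompts, so check Anthropic's pricing page before budgeting with it.

```python
# Estimate the API cost of one Claude Opus 4.6 request from token counts.
# Prices are the per-million-token rates quoted in this article; discounts
# (caching, batch) and any long-prompt pricing are deliberately ignored.

INPUT_PRICE_PER_M = 5.00    # USD per 1M input tokens
OUTPUT_PRICE_PER_M = 25.00  # USD per 1M output tokens


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the estimated cost in USD for a single request."""
    return (input_tokens * INPUT_PRICE_PER_M
            + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000


if __name__ == "__main__":
    # A hypothetical large-context job: 200K tokens in, 20K tokens out.
    print(f"${request_cost(200_000, 20_000):.2f}")  # $1.50
```

Note how output tokens cost five times as much as input: long, chatty answers (or repeated attempts) add up faster than big prompts.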

| Metric | Claude Opus 4.6 | OpenAI GPT-5.2 (estimated) |
|---|---|---|
| Input cost (per 1M tokens) | $5 | ~$40 (8x higher) |
| Output cost (per 1M tokens) | $25 | ~$200 (8x higher) |
| Terminal-Bench 2.0 score | 65.4% | Below 65.4% (industry's next-best) |

Note: The GPT-5.2 costs are our estimates based on what people are reporting online, not official pricing. They suggest Opus 4.6 is much cheaper for some tasks.

Opus 4.6: A Competitive Edge

In the rapidly evolving AI landscape, Claude Opus 4.6 carves out a competitive niche through several key differentiators:

- Context Window Parity with Gemini 1.5 Pro: With its 1M-token context window (in beta), Opus 4.6 matches the 1M-token window Google launched with Gemini 1.5 Pro (which Google later expanded to 2M). That lets it take in thousands of pages of text or tens of thousands of lines of code in a single prompt, which is crucial for deep analysis and long-running, complex projects.

- Agent Teams: A new feature introduced alongside Opus 4.6 is "Agent Teams" in Claude Code. It lets multiple AI agents work in parallel on different parts of a coding project, spreading out the work and avoiding single-agent bottlenecks, which can speed up development considerably.

- Enhanced Self-Correction and Planning: Opus 4.6 is noticeably better at planning carefully, reviewing its own work, and troubleshooting and fixing its own errors, especially bugs in code it has generated. That means more reliable output and less need for human intervention.
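
Anthropic doesn't describe Agent Teams' internals here, so as a rough mental model only: a lead agent splits a project into independent subtasks, worker agents handle them in parallel, and the lead merges the results. The sketch below shows that fan-out/fan-in pattern with Python's standard `concurrent.futures`; `run_agent` and `run_team` are hypothetical names, and `run_agent` stands in for what would really be a model-driven worker.

```python
# Fan-out/fan-in sketch of parallel agents (a mental model, NOT Claude Code's
# actual Agent Teams implementation). `run_agent` is a hypothetical stand-in.
from concurrent.futures import ThreadPoolExecutor


def run_agent(subtask: str) -> str:
    """Hypothetical worker agent: pretend to complete one subtask."""
    return f"done: {subtask}"


def run_team(subtasks: list[str], max_workers: int = 4) -> list[str]:
    """Run subtasks on parallel workers; return results in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves the order of `subtasks`, even if workers
        # finish out of order.
        return list(pool.map(run_agent, subtasks))


if __name__ == "__main__":
    plan = ["update API routes", "migrate database schema", "fix failing tests"]
    for line in run_team(plan):
        print(line)
```

The hard part in practice is choosing subtasks that don't step on each other (for example, two agents editing the same file); this sketch simply assumes they're independent.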

What People Are Really Saying: The Good, The Bad, and The Tricky Parts

I went through the online forums so you wouldn't have to, and the reactions to Claude Opus 4.6 are mixed. Some people are amazed; others are skeptical. The official benchmarks make it look nearly flawless, but real-world use reveals some tricky limitations.

One comment I kept seeing was that "Opus 4.6 loves to second-guess itself." Users in online discussions also reported that it hallucinated when facing brand-new problems, particularly on benchmarks like EsoBench.

This second-guessing can make the model slower and potentially more expensive, especially when it makes many attempts before settling on an answer. Even the most advanced models aren't perfect, particularly on problems they haven't seen before, and these quirks point to a bigger challenge for using AI in high-stakes situations. They echo the concerns about reliability and the need for a 'human touch' that came up when we looked at how Zocks and Wealth.com use AI.

Here's something interesting: on the ARC-AGI leaderboard, which ranks models on abstract-reasoning puzzles, some users noticed that the "Max" configuration of Opus 4.6 actually scored slightly lower than the "high" one. That unexpected result suggests that throwing more compute at a model doesn't always improve its results on every hard reasoning task.

How It Stacks Up Against Other AIs and What That Means for You

In today's fiercely competitive AI market, Claude Opus 4.6 often goes head-to-head with other top models like OpenAI's GPT-5.2. Opus 4.6 clearly leads in some areas, especially agentic planning and large business projects, but the biggest difference between them may be cost.

As I mentioned earlier, Reddit users report that Opus 4.6 can cost "less than an eighth" as much as a high-end GPT-5.2 configuration on certain tasks, particularly the ARC-AGI-1 benchmark. That could be a game-changer for many developers and businesses, especially for long-running or data-heavy tasks.

Beyond head-to-head comparisons, it's worth remembering the bigger challenges that all advanced models, Opus 4.6 included, still face. One major problem is that they often act like a 'black box': it's hard to understand why a model makes a particular choice, which matters most when the stakes are high. This is an active area of research (known as interpretability) and something to think hard about before deploying AI in real-world situations. It isn't an Anthropic-specific issue; it's a shared concern for all powerful language models.

A Handy Tip and My Final Advice

So, should you start using Claude Opus 4.6 in your work? Here's my advice: it's a seriously powerful tool if you're dealing with complex tasks where the AI needs to plan and act on its own, high-stakes business work like reviewing legal documents or migrating large codebases, or long, multi-step projects that need sustained effort. Its improved planning and huge context window make it especially strong in these situations.

However, be careful with brand-new problems, where the model may "second-guess" itself. And if your tasks are simpler and cost is a concern, other models may do the job just as well for less. My best tip: use the '/effort' setting. If Opus 4.6 seems to be overthinking an easy job, dial its effort down from 'high' (the default) to 'medium.' It will respond faster and cost less without losing much quality. It's a small control that can make a big difference for both your experience and your wallet.
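
If you call Opus 4.6 through the API rather than an app, one way to apply this tip is to pick an effort level per request based on how hard the task looks. The sketch below only builds a request body and never calls the API. The model ID `claude-opus-4-6`, the placement of the effort setting under `output_config`, and the keyword heuristic in `pick_effort` are all assumptions for illustration; check Anthropic's API documentation for the exact field names before relying on it.

```python
# Choose an effort level per request (sketch; builds a payload, no API call).
# ASSUMPTIONS: the model ID "claude-opus-4-6", the "output_config"/"effort"
# field names, and the keyword heuristic are illustrative only; verify them
# against Anthropic's API documentation.

HARD_HINTS = ("refactor", "migrate", "debug", "architecture")


def pick_effort(task: str) -> str:
    """Hypothetical heuristic: 'high' for hard-sounding or long tasks, else 'medium'."""
    text = task.lower()
    if any(hint in text for hint in HARD_HINTS) or len(text) > 2_000:
        return "high"
    return "medium"


def build_request(task: str, max_tokens: int = 4_096) -> dict:
    """Build a Messages-API-style request body with a per-task effort level."""
    return {
        "model": "claude-opus-4-6",                      # assumed model ID
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": task}],
        "output_config": {"effort": pick_effort(task)},  # assumed field name
    }


if __name__ == "__main__":
    print(build_request("Summarize this email in two sentences.")["output_config"])
```

A keyword list is a crude signal; in practice you might route by task type in your own application instead, but the idea is the same: reserve 'high' effort for the jobs that need it.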

My Final Thoughts: Is It Right for You?

After digging into Anthropic's claims, the underlying technology, and what the community thinks, my final take on Claude Opus 4.6 is clear: it's a very powerful tool for agentic tasks, where the AI needs to plan and act, and for large business projects. It shows real improvements in complex problem-solving, long-context understanding, and coding, which makes it a strong partner for experienced engineers and technical leaders at large companies.

But be aware of its higher price compared with smaller models, and of its limits on brand-new, unexplored problems, where it can show quirks like second-guessing itself or making things up. If your main goal is robust, multi-step automation of tasks you already understand well, Opus 4.6 is a top choice. If you're a hobbyist or a budget-conscious content creator, or your tasks are simpler and more exploratory, a cheaper model like Claude Sonnet (or even a free, open-source option) may be a better fit, saving you both money and headaches.

Frequently Asked Questions

Since it sometimes second-guesses itself and makes things up, can I really trust Claude Opus 4.6 for important business tasks?

Opus 4.6 is very good at large, multi-step business tasks that are clearly defined. On brand-new or ambiguous problems, though, its reliability can be shakier. For critical work, test it thoroughly and have a human review its output, especially where second-guessing or small mistakes could cause big problems.

How can I use Claude Opus 4.6's cool features without spending too much money on my projects?

Opus 4.6 is a premium model, but its 'effort' controls help keep costs down. For easier tasks, turn the effort setting down so it thinks less, responds faster, and costs less. For complex, high-stakes tasks, its large context window and planning ability can easily justify the cost by saving development time and improving results.

Is Claude Opus 4.6 always better than OpenAI's GPT-5.2 for every advanced AI job?

Not for every job. Opus 4.6 has a clear lead in some areas, like agentic planning and large business projects, and it's often cheaper for certain tasks. But the 'best' model depends on what you need, how much you want to spend, and whether you can live with its reported quirks on brand-new problems. Compare both against your project's specific requirements.

Sources & References

- Claude Opus 4.6 \ Anthropic

- On the Biology of a Large Language Model

- Anthropic Economic Index report: Economic primitives \ Anthropic

- Designing AI resistant technical evaluations \ Anthropic

- [2404.03647] Capabilities of Large Language Models in Control Engineering: A Benchmark Study on GPT-4, Claude 3 Opus, and Gemini 1.0 Ultra

- Claude Opus 4.6 targets research workflows with 1M-token context window, improved scientific reasoning

- Opus 4.6 on Vending-Bench – Not Just a Helpful Assistant | Andon Labs

- Has anyone provided a in-depth analysis on WHY Claude 4.5 Opus is so good? (Comment by 'wayji')

- Reddit Thread #1: Claude Opus 4.6 achieves highest ARC-AGI scores for non-refined models so far.

- ARC Prize Leaderboard

Yousef S. | Latest AI

AI Automation Specialist & Tech Editor

Yousef S. is a seasoned AI Automation Specialist and Tech Editor with over 5 years of hands-on experience in deploying and optimizing large language models for enterprise solutions. He specializes in evaluating frontier AI models like Claude Opus 4.6, providing practical insights into their real-world performance, integration challenges, and strategic value for businesses.